Business Goal

The goal of the project is to analyze logs from our website. We want to generate the kind of reports that a typical web analytics tool (Google Analytics, for example) produces.

The original data was collected with the open-source web tracker WT1. It contains one month of data (March 2014). Note that the IP addresses have been anonymized (replaced with random values).

How We Do This

To build this project, we have a single data source: the web logs collected by our website.

Explore This Sample Project

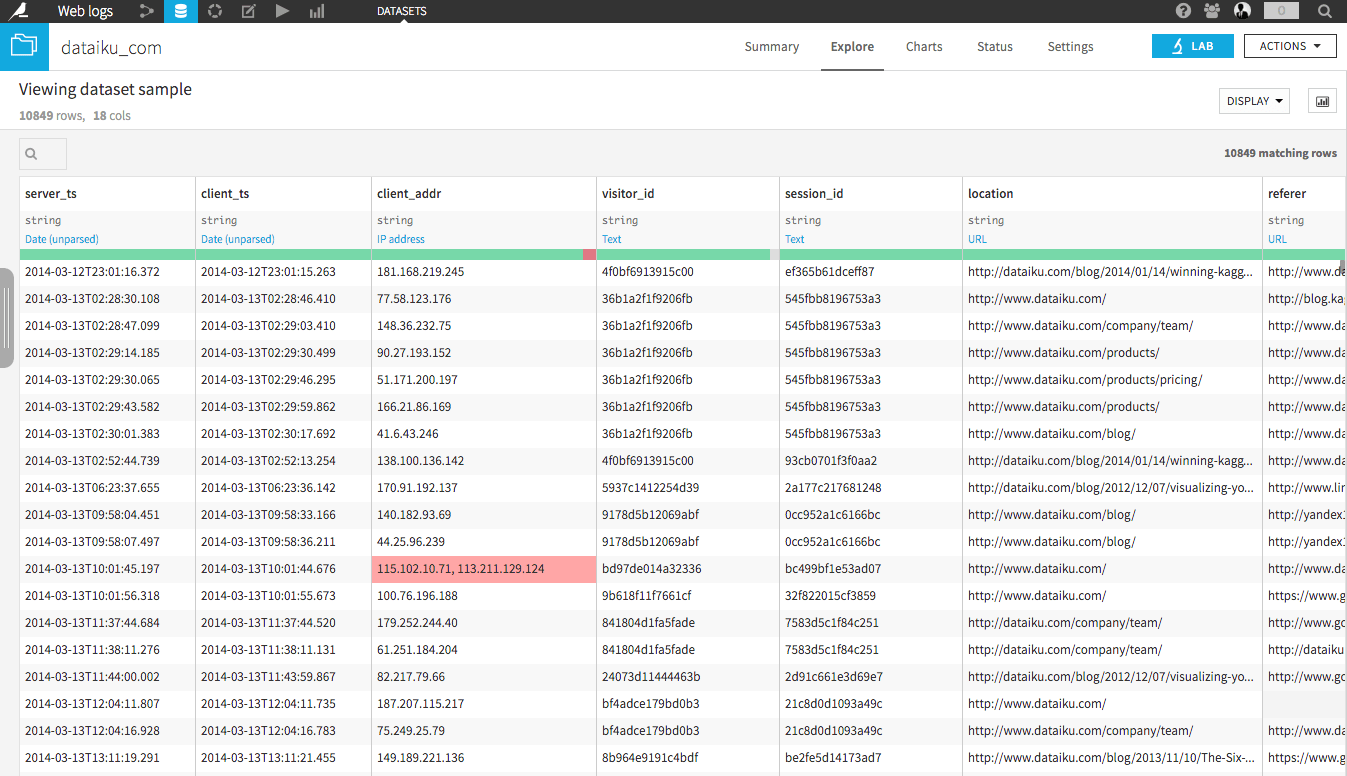

Input data

We've uploaded our file-system dataset containing the original logs collected by WT1. The dataset is partitioned by day. Each line of the dataset is one page view from the website by one person. The "location" column contains the URL of the page visited.

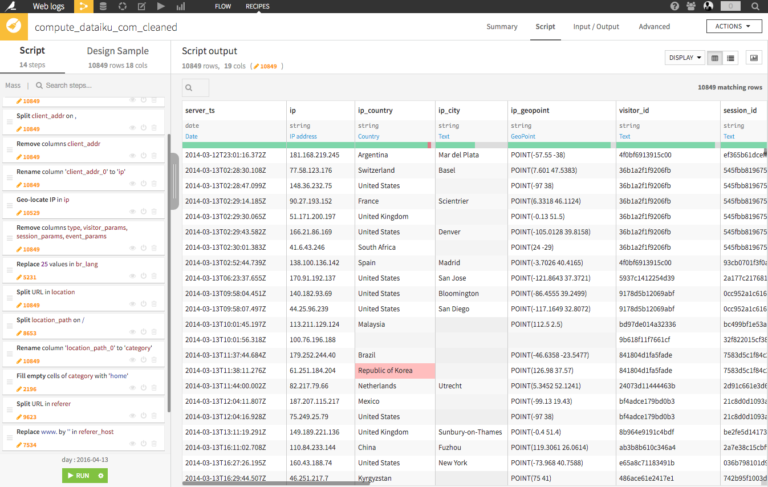

Cleaning recipe

After uploading the data, we start by parsing the date, cleaning the data, and geolocating the IP address column. We then clean the referer column and create a 'category' column based on the URL.
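The cleaning steps above can be sketched in plain Python. This is only an illustration, not the recipe used in the project: the `timestamp` field name, its ISO 8601 format, the `referer_host` field, and the rule "category = first path segment of the URL" are all assumptions made for the example. Geolocation is left out because it needs an external IP database.

```python
from datetime import datetime
from urllib.parse import urlparse


def clean_row(row):
    """Return a cleaned copy of one page-view record (hypothetical schema)."""
    cleaned = dict(row)

    # Parse the date. Assumption: the raw value is an ISO 8601 string.
    cleaned["timestamp"] = datetime.fromisoformat(row["timestamp"])

    # Clean the referer: keep only the host name, or None when it is empty.
    referer = row.get("referer") or ""
    cleaned["referer_host"] = urlparse(referer).netloc or None

    # Derive a category from the visited URL (the "location" column).
    # Assumption: the first path segment is a good enough category.
    path = urlparse(row["location"]).path
    segments = [s for s in path.split("/") if s]
    cleaned["category"] = segments[0] if segments else "home"

    return cleaned


# Made-up sample record, for illustration only.
sample = {
    "timestamp": "2014-03-05T10:15:00",
    "location": "http://www.example.com/blog/some-post",
    "referer": "https://www.google.com/search?q=x",
}
cleaned_sample = clean_row(sample)
```

In the project itself these steps are done in a visual preparation recipe, so no code is required there.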

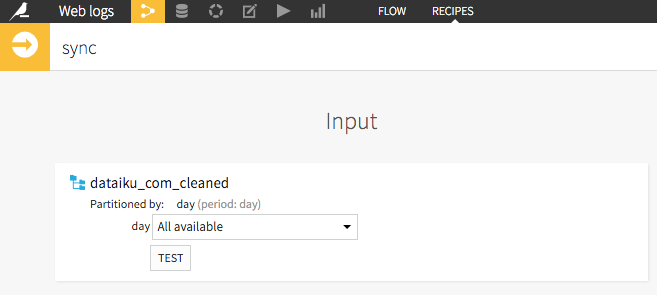

Sync recipe

In the second step, we sync the data to a PostgreSQL database so that we can write SQL and so that our calculations and graphs run faster. Note that we also un-partition the dataset, which is easier for this project.

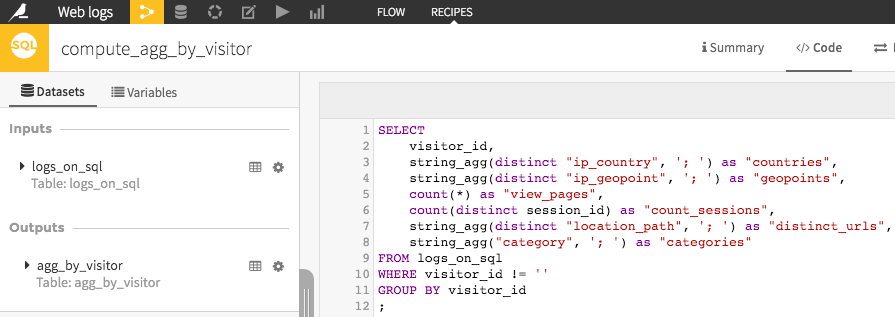

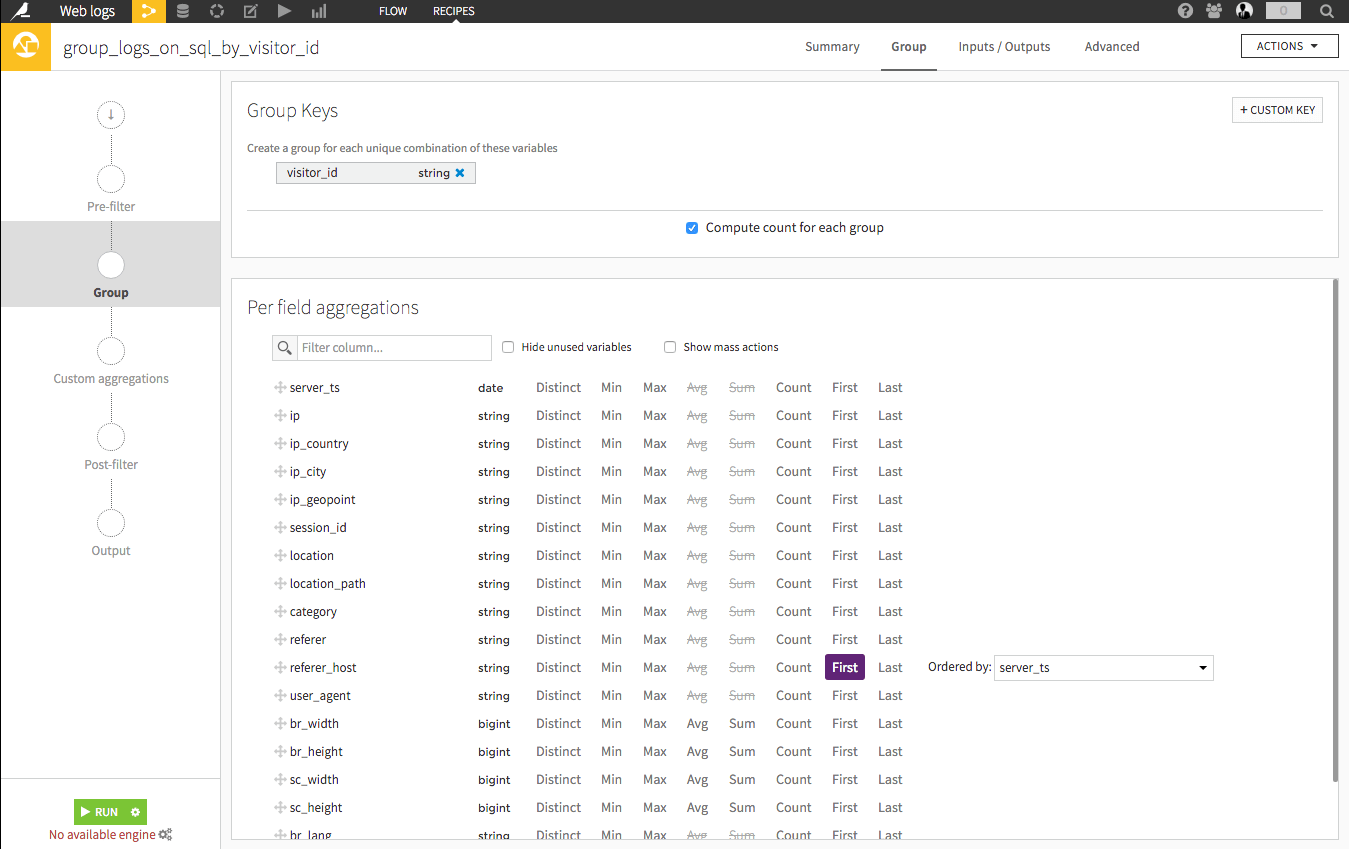

SQL Group recipe

Then, our project splits into two branches. In our first branch, we group our dataset by visitor_id using SQL, so we can get all the info on each of our visitors.
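As a rough idea of what grouping by visitor_id looks like in SQL, here is a small sketch that runs against an in-memory SQLite database from Python. The project uses PostgreSQL, so SQLite is swapped in here only to keep the example self-contained. The table name `weblogs`, the `ts` column, the sample rows, and the chosen aggregates are assumptions, not the project's actual query.

```python
import sqlite3

# In-memory database standing in for the PostgreSQL dataset (assumption).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE weblogs (visitor_id TEXT, ts TEXT, location TEXT)")
conn.executemany(
    "INSERT INTO weblogs VALUES (?, ?, ?)",
    [
        ("a", "2014-03-01T09:00:00", "http://www.example.com/"),
        ("a", "2014-03-02T10:00:00", "http://www.example.com/blog"),
        ("b", "2014-03-01T11:30:00", "http://www.example.com/"),
    ],
)

# One output row per visitor: page-view count, first visit, last visit.
per_visitor = conn.execute(
    """
    SELECT visitor_id,
           COUNT(*) AS page_views,
           MIN(ts)  AS first_seen,
           MAX(ts)  AS last_seen
    FROM weblogs
    GROUP BY visitor_id
    ORDER BY visitor_id
    """
).fetchall()
conn.close()
```

The same `SELECT ... GROUP BY visitor_id` statement should also be valid in PostgreSQL, since it only uses standard aggregates.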

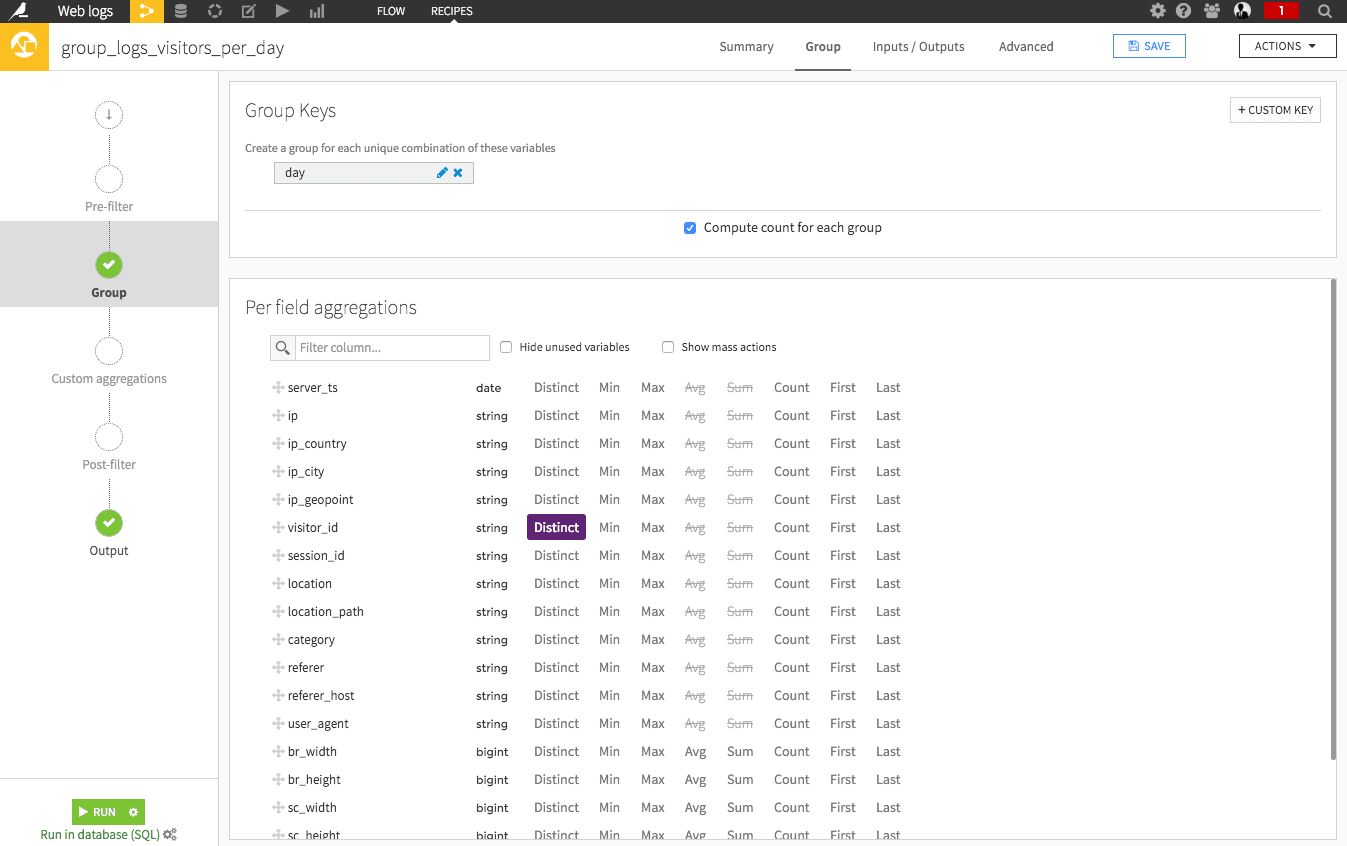

Visual Group recipe 1

Next, we use a visual Group recipe to get the first referrer per visitor_id. This gives us each visitor's point of entry to the site.
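The "first referrer per visitor" logic can be sketched in a few lines of Python. The record layout (`visitor_id`, `ts`, `referer` keys) and the sample data are hypothetical, and "first" is taken to mean "earliest timestamp", which is an assumption about how the recipe orders page views.

```python
def first_referrer_per_visitor(page_views):
    """Map each visitor_id to the referer of its earliest page view."""
    first = {}
    # Sort by timestamp so the first record seen per visitor is the earliest.
    # ISO 8601 strings in the same format sort chronologically.
    for view in sorted(page_views, key=lambda v: v["ts"]):
        first.setdefault(view["visitor_id"], view["referer"])
    return first


# Made-up sample data, for illustration only.
views = [
    {"visitor_id": "a", "ts": "2014-03-02T10:00:00", "referer": "www.example.com"},
    {"visitor_id": "a", "ts": "2014-03-01T09:00:00", "referer": "www.google.com"},
    {"visitor_id": "b", "ts": "2014-03-01T11:30:00", "referer": "twitter.com"},
]
entry_points = first_referrer_per_visitor(views)
```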

Visual Group recipe 2

After this, we join those two datasets and clean the result so that we can build a map from it (by creating one observation per geographic point). In the other branch, we simply group our data by day to get the number of visitors per day.
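For the second branch, a minimal sketch of the per-day grouping might look like the following. It assumes that "number of visitors" means distinct visitor_id values per calendar day, and it uses hypothetical `ts` and `visitor_id` keys with made-up sample rows.

```python
from collections import defaultdict
from datetime import datetime


def visitors_per_day(page_views):
    """Count distinct visitors for each calendar day."""
    seen = defaultdict(set)
    for view in page_views:
        # Truncate the timestamp to its date, then collect visitor ids in a
        # set so that repeat page views by one visitor count only once.
        day = datetime.fromisoformat(view["ts"]).date()
        seen[day].add(view["visitor_id"])
    return {day.isoformat(): len(ids) for day, ids in sorted(seen.items())}


# Made-up sample data, for illustration only.
daily = visitors_per_day(
    [
        {"visitor_id": "a", "ts": "2014-03-01T09:00:00"},
        {"visitor_id": "a", "ts": "2014-03-01T09:05:00"},
        {"visitor_id": "b", "ts": "2014-03-01T11:30:00"},
        {"visitor_id": "a", "ts": "2014-03-02T10:00:00"},
    ]
)
```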

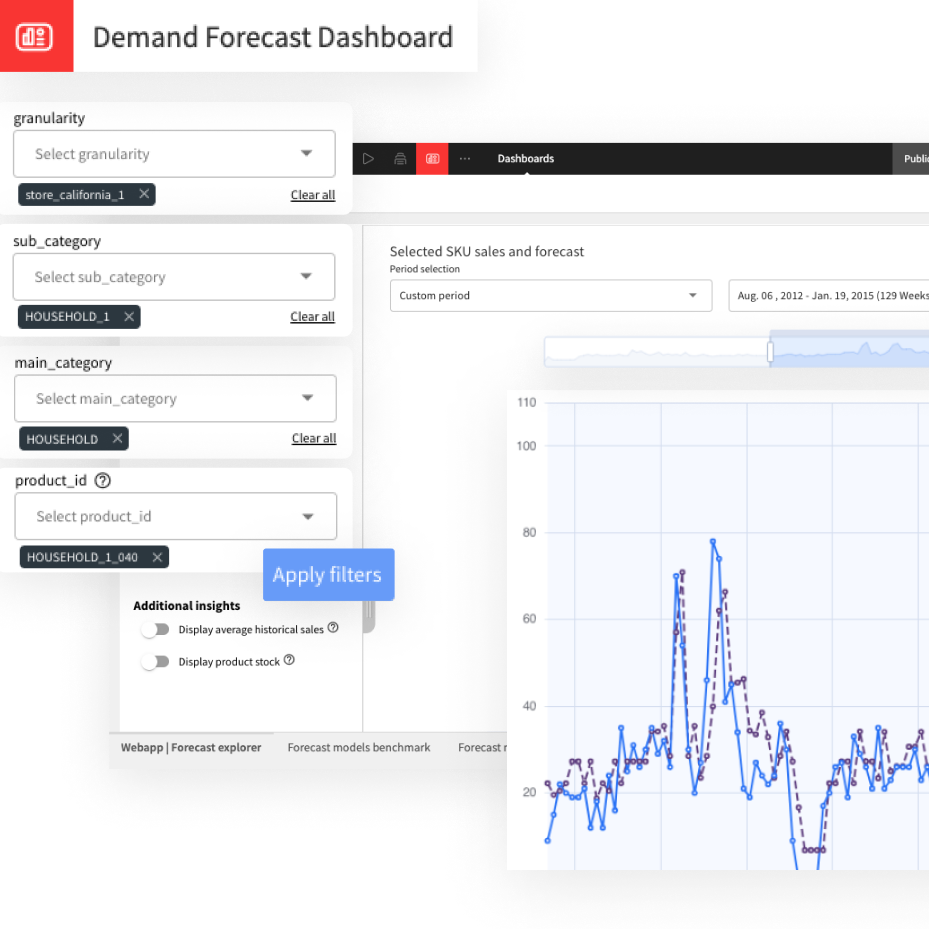

Dashboard

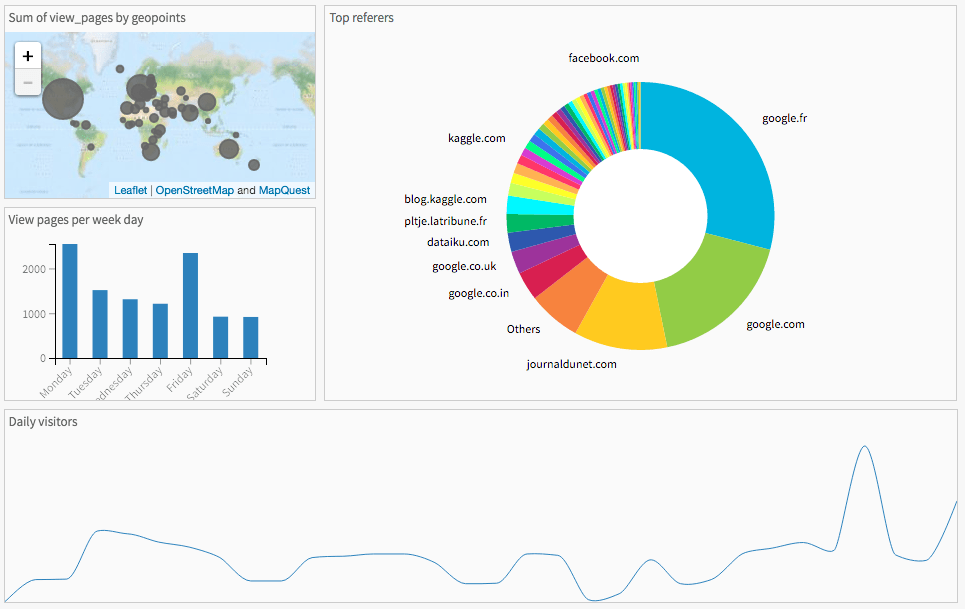

On these different datasets, we created some charts. All of them are attached to the dashboard. You can find:

- page views per location

- page views per weekday

- top referrers

- daily visitors
