The observability gap in agentic systems

Traditional software follows predictable execution paths. Engineers can test inputs, validate outputs, and reproduce errors consistently. But agentic systems behave differently.

AI agents operate probabilistically, interact with multiple services, and adapt to changing inputs. The same request may follow different reasoning paths depending on factors such as the available context or data.

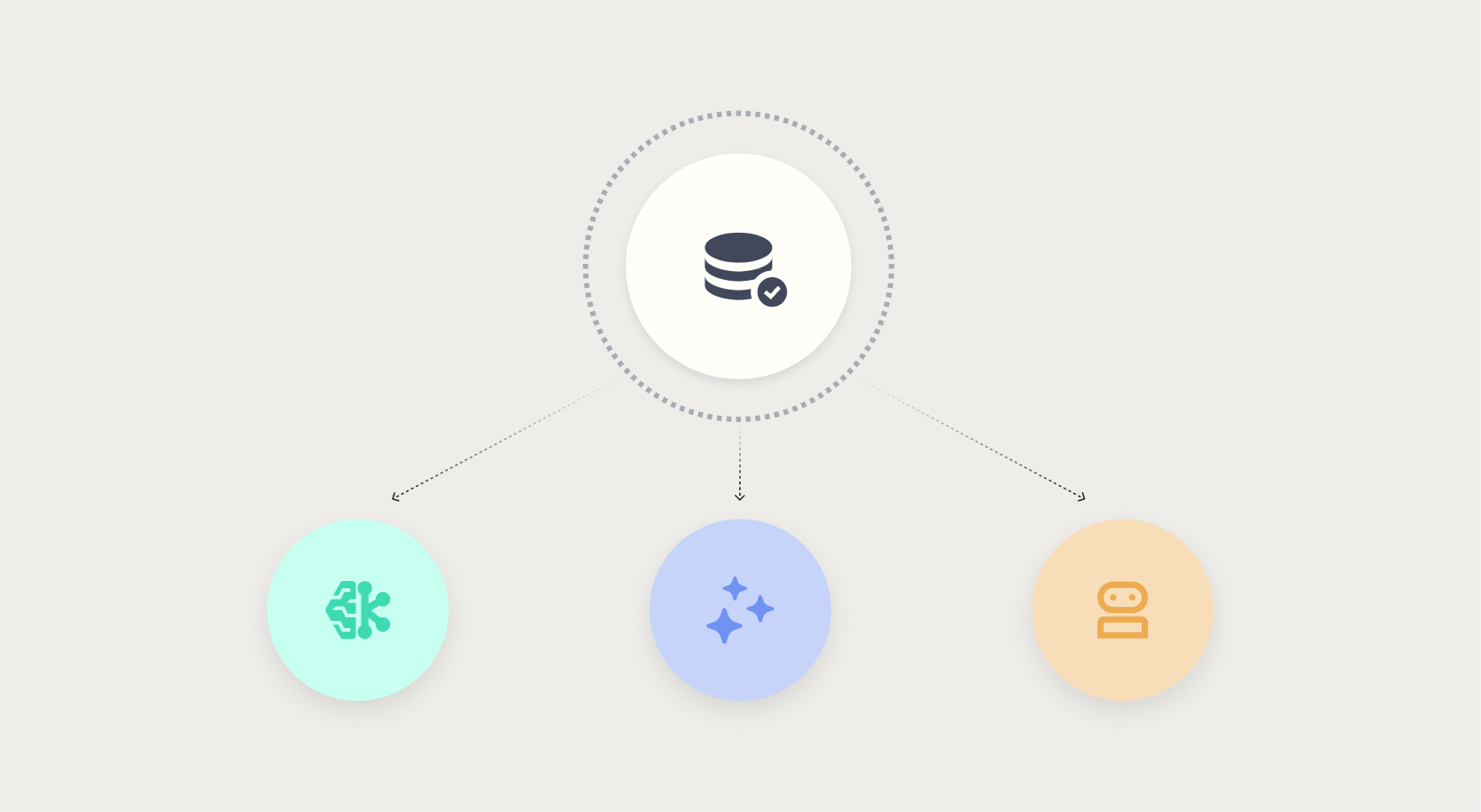

In multi-agent environments, complexity compounds quickly. Agents call other agents. Tools trigger additional workflows. External APIs introduce dependencies outside the system boundary.

Without observability, teams face a familiar sequence:

1. A system appears healthy.

2. Users report incorrect results.

3. No one knows why.

Observability closes that gap by capturing signals from every step of the AI workflow.

So instead of asking whether the system is running, teams can answer deeper questions:

- Which tools did the agent call?

- What prompts and context influenced the output?

- Where did latency occur in the workflow?

- Which component introduced the error?
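As a rough illustration, the questions above can be answered once each step of a request is recorded as a span. The sketch below uses only the Python standard library. The `Span` and `Trace` classes, the `kind` values, and the step names (`plan`, `lookup_orders`, `send_email`) are all hypothetical, chosen for illustration rather than taken from any particular observability product.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    """One recorded step of an agent workflow (hypothetical schema)."""
    name: str
    kind: str  # assumed categories: "llm", "tool", "agent"
    attributes: dict = field(default_factory=dict)
    duration_ms: float = 0.0
    error: Optional[str] = None


class Trace:
    """Collects the spans produced while handling a single request."""

    def __init__(self):
        self.spans = []

    @contextmanager
    def step(self, name, kind, **attributes):
        # Record timing and any exception for the wrapped block.
        span = Span(name=name, kind=kind, attributes=attributes)
        start = time.perf_counter()
        try:
            yield span
        except Exception as exc:
            span.error = f"{type(exc).__name__}: {exc}"
            raise
        finally:
            span.duration_ms = (time.perf_counter() - start) * 1000
            self.spans.append(span)

    def tools_called(self):
        """Which tools did the agent call?"""
        return [s.name for s in self.spans if s.kind == "tool"]

    def slowest(self):
        """Where did latency occur in the workflow?"""
        return max(self.spans, key=lambda s: s.duration_ms, default=None)

    def failures(self):
        """Which component introduced the error?"""
        return [s for s in self.spans if s.error]


def run_demo():
    """Simulate one request with a planning step and two tool calls."""
    trace = Trace()
    # The prompt is stored as an attribute so it can be inspected later.
    with trace.step("plan", "llm", prompt="Summarize open orders"):
        pass
    with trace.step("lookup_orders", "tool", customer_id="c-123"):
        time.sleep(0.01)  # stand-in for a slow external call
    try:
        with trace.step("send_email", "tool"):
            raise TimeoutError("mail API did not respond")
    except TimeoutError:
        pass  # the failure is still captured on the span
    return trace
```

Calling `run_demo()` returns a trace whose `tools_called()`, `slowest()`, and `failures()` methods map directly onto the questions in the list. A production system would more likely emit these spans through an established tracing standard such as OpenTelemetry than through a hand-rolled recorder like this one.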

The goal goes beyond simple monitoring: it is real transparency into how the system behaves.
