The core idea: context as environment

In a standard LLM interaction, the context window is a static container. You put things in, the model processes them, you get an output. In a recursive architecture, the context is treated more like an interactive environment, something the model can probe, query, and navigate rather than simply receive.

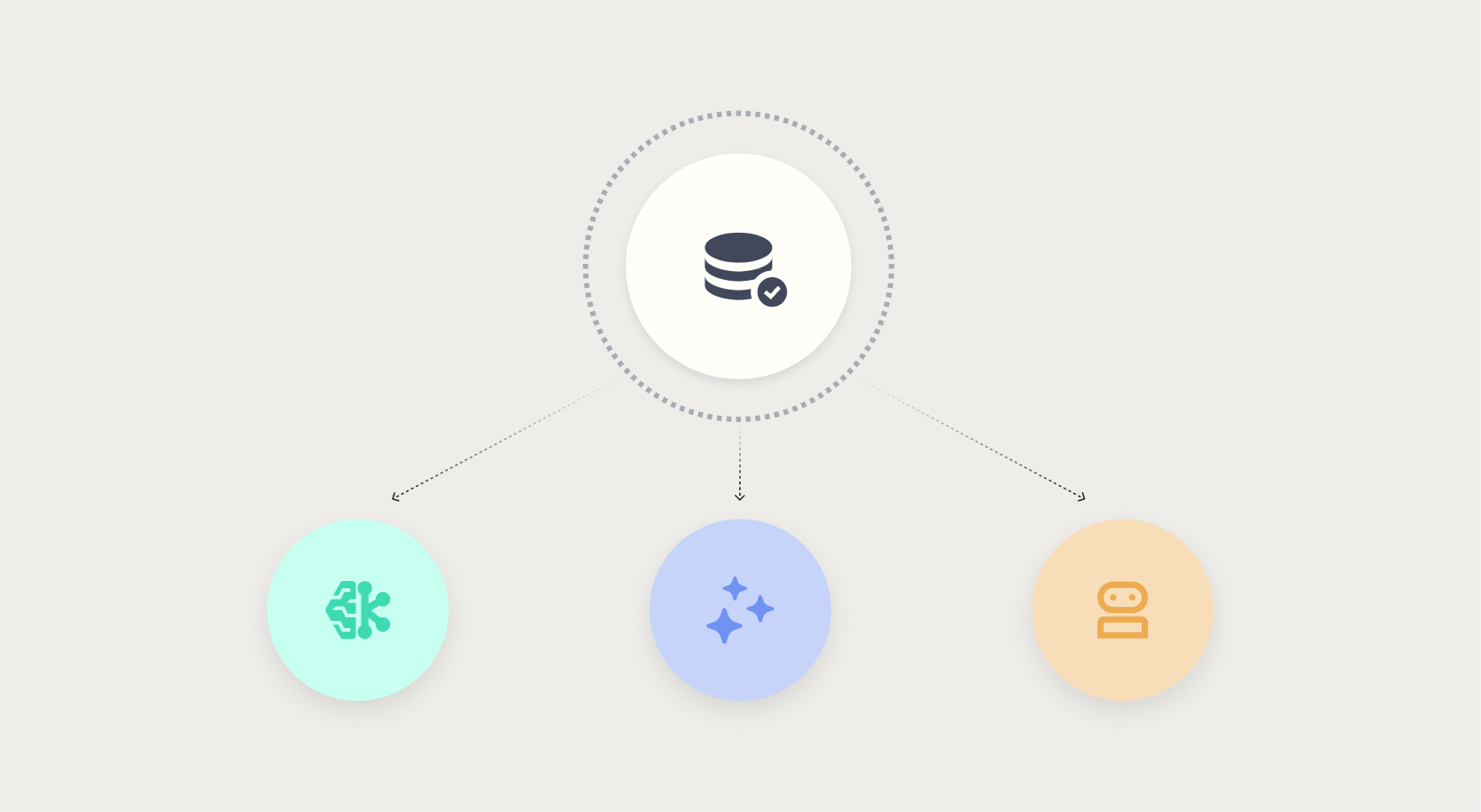

The mechanism is tool use. Rather than receiving a pre-loaded context containing all potentially relevant information, the model is given tools that allow it to retrieve information on demand: search functions, code execution environments, database query interfaces, document browsers.

When the model needs to know something, it calls the appropriate tool, receives a targeted result, and incorporates that result into its reasoning. The context at any given moment contains only what the model has actively retrieved, not everything that might conceivably be relevant.
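The retrieve-on-demand loop described above can be sketched in a few lines of Python. This is a minimal illustration, not a definitive implementation: the `DOCUMENTS` store, the `search` and `read_document` tools, and the `scripted_model` function are all hypothetical stand-ins. A real system would replace `scripted_model` with a call to an LLM that supports tool use.

```python
from typing import Callable

# Hypothetical in-memory "information space". A real system would sit in
# front of a search index, database, or file system instead.
DOCUMENTS = {
    "billing.md": "Invoices are generated on the first day of each month.",
    "auth.md": "Sessions expire after 30 minutes of inactivity.",
    "deploy.md": "Deployments run through the staging cluster first.",
}


def search(query: str) -> list[str]:
    """Return the names of documents whose text mentions the query."""
    return [name for name, text in DOCUMENTS.items()
            if query.lower() in text.lower()]


def read_document(name: str) -> str:
    """Return the full text of one document, or an empty string if missing."""
    return DOCUMENTS.get(name, "")


# Tools the model is allowed to call (names are assumptions for this sketch).
TOOLS: dict[str, Callable] = {"search": search, "read_document": read_document}


def scripted_model(question: str, context: list[dict]) -> dict:
    """Stand-in for an LLM: pick the next action from what was retrieved so far."""
    if not context:
        return {"tool": "search", "arg": "sessions"}
    last = context[-1]
    if last["tool"] == "search" and last["result"]:
        return {"tool": "read_document", "arg": last["result"][0]}
    if last["tool"] == "read_document":
        return {"answer": last["result"]}
    return {"answer": "not found"}


def run(question: str, model: Callable, max_steps: int = 5):
    """Let the model build its own context through a sequence of tool calls."""
    context: list[dict] = []  # holds only what the model has actively retrieved
    for _ in range(max_steps):
        action = model(question, context)
        if "answer" in action:
            return action["answer"], context
        result = TOOLS[action["tool"]](action["arg"])
        context.append({"tool": action["tool"], "arg": action["arg"], "result": result})
    return "step limit reached", context


answer, context = run("When do sessions expire?", scripted_model)
```

In this run the context ends up holding two entries, one search result and one document, while the other two documents are never loaded. The `max_steps` cap is a design choice for the sketch: it keeps a model that never produces an answer from looping indefinitely.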

This shifts the architecture from passive reception to active exploration. Instead of processing a pre-assembled information package, the model acts as an agent that builds its own context through a sequence of targeted queries.

For tasks that require reasoning across large information spaces, such as deep research, codebase analysis, and complex multi-document synthesis, this can be far more effective than trying to fit everything into a single prompt, because the context holds only the material the model has chosen to retrieve.
