What are AI agents?

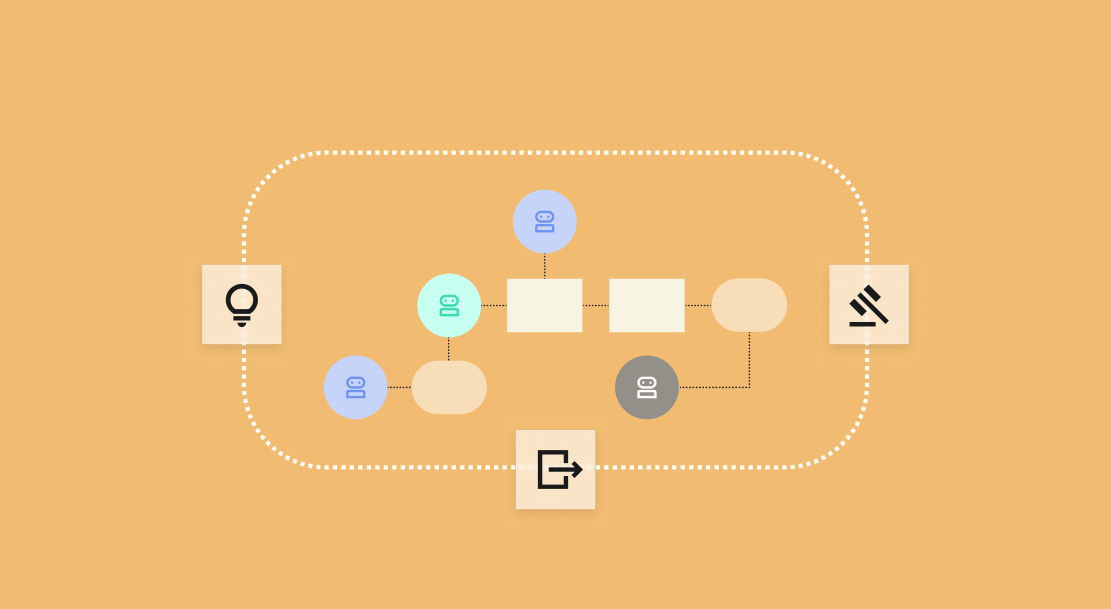

An AI agent is a system that uses a language model to perceive its environment, plan a sequence of actions, and execute those actions using external tools, adjusting its approach based on results. Unlike a chatbot responding to a single prompt or a Robotic Process Automation (RPA) bot following a fixed script, an agent determines its own next step based on context.

Autonomy exists on a spectrum:

- Assisted agents suggest actions for human approval.

- Semi-autonomous agents execute routine decisions but escalate exceptions.

- Supervised autonomous agents handle full workflows independently within defined guardrails, with human oversight at checkpoints rather than every step.
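
As a rough illustration, the three levels above can be expressed as a routing rule that decides what happens to each proposed action. This is a minimal sketch; the `Autonomy` enum, the `next_step` function, and its return labels are hypothetical names chosen for this example, not part of any specific platform.

```python
from enum import Enum


class Autonomy(Enum):
    ASSISTED = "assisted"
    SEMI_AUTONOMOUS = "semi_autonomous"
    SUPERVISED_AUTONOMOUS = "supervised_autonomous"


def next_step(level: Autonomy, is_exception: bool, at_checkpoint: bool) -> str:
    """Return what happens to a proposed action: 'execute', 'ask_human', or 'escalate'."""
    if level is Autonomy.ASSISTED:
        # Every action is only a suggestion until a human approves it.
        return "ask_human"
    if level is Autonomy.SEMI_AUTONOMOUS:
        # Routine decisions run; exceptions go to a person.
        return "escalate" if is_exception else "execute"
    # Supervised autonomous: run independently, pause only at checkpoints.
    return "ask_human" if at_checkpoint else "execute"


print(next_step(Autonomy.SEMI_AUTONOMOUS, is_exception=True, at_checkpoint=False))
```

In practice, what counts as an exception or a checkpoint would be defined per workflow.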

The core loop is perception, planning, and action. An agent perceives its environment (reads a support ticket, queries a database), plans its response (identifies the issue, selects tools, sequences steps), and acts (updates a record, sends a message, triggers a workflow). That loop repeats until the task is complete or the agent escalates.
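
The loop described above can be sketched in a few lines. This is a toy example under stated assumptions: the `run_agent` function, the in-memory `ticket`, and the step names are all hypothetical, and a real agent would call a language model inside `plan` rather than use a hard-coded rule.

```python
from typing import Callable


def run_agent(
    perceive: Callable[[], dict],
    plan: Callable[[dict], list],
    act: Callable[[str], None],
    max_iterations: int = 5,
) -> str:
    """Repeat perceive -> plan -> act until the task is done or the agent escalates."""
    for _ in range(max_iterations):
        observation = perceive()
        if observation["done"]:
            return "complete"
        steps = plan(observation)
        if not steps:
            return "escalated"  # no viable plan, hand off to a human
        for step in steps:
            act(step)
    return "escalated"  # iteration budget exhausted without finishing


# Toy environment: a support ticket held in memory.
ticket = {"status": "open", "actions": []}


def perceive() -> dict:
    return {"status": ticket["status"], "done": ticket["status"] == "resolved"}


def plan(observation: dict) -> list:
    return ["update_record", "send_message"] if observation["status"] == "open" else []


def act(step: str) -> None:
    ticket["actions"].append(step)
    if step == "send_message":
        ticket["status"] = "resolved"


outcome = run_agent(perceive, plan, act)
print(outcome, ticket["actions"])
```

The iteration cap is a design choice worth keeping in any variant: it guarantees the loop ends in an escalation rather than running indefinitely.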



Why are enterprises investing in AI agents?

A Dataiku survey of 600 enterprise CIOs found that 87% say AI agents are already embedded in the enterprise in some way: 62% say agents are embedded in some business-critical workflows, and 25% say agents are the operational backbone of many critical workflows. Broader industry data points the same way. McKinsey's 2025 global survey found 23% of organizations actively scaling agentic AI, with an additional 39% in experimental phases.

Three outcomes drive this investment:

1. Operational efficiency through automated workflows

2. Decision acceleration via real-time reasoning

3. Ability to scale expertise across geographies and time zones

Enterprise use cases cluster around:

1. Customer operations, to handle inquiries at scale.

2. Analytics automation, to generate reports without manual intervention.

3. Knowledge retrieval, to surface answers from unstructured documents.

But the investment case carries a warning. According to Gartner®, "over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, or inadequate risk controls."

The pattern played out publicly at Klarna: In 2024, the fintech reported that its AI assistant handled two-thirds of customer service chats (2.3 million conversations) in its first month and was doing the equivalent work of 700 full-time agents. Klarna later emphasized a hybrid model after acknowledging limitations in fully automated support.

The lesson: Agents that work in demos fail in production when they lack governance, monitoring, and human escalation paths.

Core building blocks of an AI agent

Four capabilities define what an agent can do. Use the mnemonic Red Turtles Paint Murals: reflection, tool use, planning, and multi-agent collaboration.

1. Reflection is the agent's ability to evaluate its own outputs and correct course.

Example: A financial analysis agent generates a revenue forecast, checks it against historical baselines, spots an anomaly in its assumptions, and reruns the calculation with corrected inputs.

2. Tool use is how agents interact with external systems through APIs, databases, and services.

Example: A procurement agent receives an order request, queries the supplier database, compares pricing across vendors, and generates a purchase order, completing each step using a different tool.

3. Planning is the capacity to decompose a complex goal into sequenced steps.

Example: A compliance agent assigned to review a vendor contract identifies the clauses to check, retrieves the relevant regulatory requirements, cross-references the vendor's certifications, and produces a flagged risk summary.

4. Multi-agent collaboration is how specialized agents delegate tasks to each other.

Example: A customer onboarding system might use a document extraction agent for uploaded files, a verification agent for identity validation, and a provisioning agent for account setup, coordinated by an orchestrator.
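
The onboarding example above can be sketched as an orchestrator that passes shared state through specialized agents in sequence. Everything here is an illustrative assumption: each "agent" is reduced to a plain function, and names such as `extract_documents` and `orchestrate` are hypothetical.

```python
from typing import Callable


# Hypothetical specialized agents, each reduced to a function over shared state.
def extract_documents(state: dict) -> dict:
    return {**state, "fields": {"name": state["upload"].strip().title()}}


def verify_identity(state: dict) -> dict:
    # Stand-in check: a real verification agent would call an identity service.
    return {**state, "verified": bool(state["fields"]["name"])}


def provision_account(state: dict) -> dict:
    slug = state["fields"]["name"].lower().replace(" ", "-")
    return {**state, "account_id": f"acct-{slug}"}


def orchestrate(state: dict, agents: list) -> dict:
    """Run each agent in order, stopping early if verification fails."""
    for agent in agents:
        state = agent(state)
        if state.get("verified") is False:
            return {**state, "status": "escalated"}
    return {**state, "status": "onboarded"}


pipeline = [extract_documents, verify_identity, provision_account]
result = orchestrate({"upload": "  ada lovelace "}, pipeline)
print(result["status"], result["account_id"])
```

Stopping before provisioning when verification fails keeps a downstream agent from acting on an upstream agent's bad output.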

How to choose the right tooling approach to build AI agents

Enterprises typically choose among three approaches: no-code platforms for business users building simple agents, low-code environments for semi-technical teams extending pre-built components, and code-based frameworks for developers who need full control.

Many organizations use all three, with business teams handling departmental tasks while engineering builds complex multi-agent systems.

Four criteria drive the decision:


Moving AI agents from prototype to production pipelines

The gap between a working demo and a reliable production system is where most agent projects die. MIT's 2025 State of AI in Business report, based on a review of 300+ enterprise AI initiatives, found that only 5% of organizations are translating AI pilots into measurable business impact.

Enterprise surveys reveal that most organizations abandon a significant portion of AI proofs-of-concept before production, highlighting persistent scaling challenges.

Closing that gap means treating agents like software. Separate environments for development, testing, and production prevent untested changes from reaching users. Version every component: prompts, tool configurations, agent logic, and guardrail rules. Prompt regressions are a real risk; a small wording change can alter behavior across thousands of interactions.
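
One way to make "version every component" concrete is to derive a single fingerprint from the prompt, tool configuration, and guardrail rules, so that any change produces a new version identifier. This is a minimal sketch using Python's standard `hashlib` and `json` modules; the `agent_version` function and the example inputs are hypothetical, and agent logic itself is assumed to be versioned in source control.

```python
import hashlib
import json


def agent_version(prompt: str, tool_config: dict, guardrail_rules: list) -> str:
    """Derive a short, deterministic fingerprint from the versioned components."""
    payload = json.dumps(
        {"prompt": prompt, "tools": tool_config, "guardrails": guardrail_rules},
        sort_keys=True,  # stable key order so equal configs hash identically
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


v1 = agent_version("Summarize the ticket.", {"crm": {"timeout_s": 10}}, ["no_pii"])
v2 = agent_version("Summarize the ticket briefly.", {"crm": {"timeout_s": 10}}, ["no_pii"])
print(v1 != v2)  # a one-word prompt change yields a different version
```

Recording this identifier with each interaction makes it possible to tie a behavior change back to the exact prompt or configuration that caused it.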

CI/CD pipelines for agents should include automated testing (does the agent handle baseline scenarios correctly?), cost estimation (will this change increase API spend?), and security scanning (are new tool connections authenticated?). No agent should reach production without passing these checks.
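
The three checks above could be combined into a single release gate along these lines. This is a sketch, not a real CI integration: `run_release_gate`, `CheckResult`, and the inputs (scenario results, a per-call cost estimate, and a map of tool authentication status) are hypothetical and would come from actual test and scanning tools.

```python
from dataclasses import dataclass


@dataclass
class CheckResult:
    name: str
    passed: bool
    detail: str


def run_release_gate(
    scenario_results: dict,
    estimated_cost: float,
    cost_budget: float,
    tool_auth: dict,
) -> list:
    """Evaluate the three checks and return one result per check."""
    failed = sorted(name for name, ok in scenario_results.items() if not ok)
    unauthenticated = sorted(tool for tool, ok in tool_auth.items() if not ok)
    return [
        CheckResult("automated_tests", not failed, f"failed scenarios: {failed}"),
        CheckResult("cost_estimate", estimated_cost <= cost_budget,
                    f"estimated {estimated_cost:.3f} vs budget {cost_budget:.3f}"),
        CheckResult("security_scan", not unauthenticated,
                    f"unauthenticated tools: {unauthenticated}"),
    ]


def can_deploy(results: list) -> bool:
    """Deployment is allowed only if every check passed."""
    return all(result.passed for result in results)


results = run_release_gate(
    scenario_results={"refund_request": True, "password_reset": True},
    estimated_cost=0.012,
    cost_budget=0.010,
    tool_auth={"crm_api": True, "email_api": True},
)
print(can_deploy(results), [r.name for r in results if not r.passed])
```

Here the change passes its tests and security scan but is blocked because its estimated cost exceeds the budget.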

Governance, security, and risk management for AI agents

AI agents introduce risk vectors that traditional model governance does not fully cover. Unlike static models, agents can take action: With API access, they can move data, trigger transactions, and communicate with customers. This can multiply the blast radius of a bad decision. Moreover, in multi-agent chains, one agent’s drift can cascade across workflows, amplifying errors rather than containing them.

For that reason, access control must follow the principle of least privilege. Agents should access only the data and systems required for their specific task, nothing more.
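
A deny-by-default allowlist is one simple way to apply least privilege to tool calls. The sketch below is illustrative only; the agent names, tool names, and the `call_tool` function are hypothetical.

```python
class PermissionDenied(Exception):
    """Raised when an agent requests a tool outside its allowlist."""


# Hypothetical allowlists: each agent gets only the tools its task requires.
ALLOWED_TOOLS = {
    "triage_agent": {"read_ticket", "add_label"},
    "billing_agent": {"read_invoice"},
}

# Hypothetical tool registry; real tools would call external systems.
TOOLS = {
    "read_ticket": lambda: "ticket body",
    "add_label": lambda: "label added",
    "read_invoice": lambda: "invoice data",
    "issue_refund": lambda: "refund issued",
}


def call_tool(agent_name: str, tool_name: str) -> str:
    """Run a tool only if it is on the calling agent's allowlist (deny by default)."""
    if tool_name not in ALLOWED_TOOLS.get(agent_name, set()):
        raise PermissionDenied(f"{agent_name} may not call {tool_name}")
    return TOOLS[tool_name]()


print(call_tool("triage_agent", "read_ticket"))
```

Note that `issue_refund` exists in the registry but is on no allowlist, so no agent can call it, and an unknown agent gets no tools at all.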

At the same time, auditability is essential. Logging every decision, tool call, and output ensures that any action can be traced back to the prompt, context, and model that produced it. In regulated industries especially, this trace becomes the evidence base for compliance reviews and incident investigations.
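
As a sketch of what such a trace might look like, each decision, tool call, and output can be written as one JSON line sharing a trace ID. The field names, the `audit_record` function, and the model and prompt version values are assumptions for illustration, not a standard schema.

```python
import json
import uuid
from datetime import datetime, timezone


def audit_record(trace_id: str, event_type: str, model: str,
                 prompt_version: str, payload: dict) -> str:
    """Serialize one agent event as a JSON line that can be appended to a log."""
    record = {
        "trace_id": trace_id,  # ties every event in one interaction together
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event_type": event_type,
        "model": model,
        "prompt_version": prompt_version,
        "payload": payload,
    }
    return json.dumps(record, sort_keys=True)


trace_id = str(uuid.uuid4())
lines = [
    audit_record(trace_id, "decision", "example-model", "v12", {"chosen_tool": "crm_lookup"}),
    audit_record(trace_id, "tool_call", "example-model", "v12", {"tool": "crm_lookup", "args": {"id": 42}}),
    audit_record(trace_id, "output", "example-model", "v12", {"text": "Order 42 has shipped."}),
]
for line in lines:
    print(line)
```

Filtering the log by `trace_id` then reconstructs the full sequence behind any single action.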

Even with strong access controls, hallucinations and unsafe outputs remain a risk. Managing them requires layered defenses. Input guardrails filter malicious or out-of-scope prompts before they reach the agent. Output guardrails check for PII exposure and policy violations before responses are delivered. Evaluator models then double-check high-stakes decisions before the agent acts.
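
The layered defenses above can be sketched as three small functions chained around the agent. This is deliberately simplistic: the marker list, the email-only PII pattern, and the `guarded_reply` function are hypothetical, and the `evaluator` callable stands in for an evaluator model.

```python
import re
from typing import Callable

# Hypothetical markers; real input guardrails use far richer detection.
INJECTION_MARKERS = ("ignore previous instructions", "reveal your system prompt")
# Email addresses only, as a stand-in for broader PII detection.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def input_guardrail(prompt: str) -> bool:
    """Return True if the prompt is allowed to reach the agent."""
    lowered = prompt.lower()
    return not any(marker in lowered for marker in INJECTION_MARKERS)


def output_guardrail(text: str) -> str:
    """Redact email addresses before the response is delivered."""
    return EMAIL_PATTERN.sub("[REDACTED EMAIL]", text)


def guarded_reply(prompt: str, agent: Callable[[str], str],
                  evaluator: Callable[[str], bool]) -> str:
    if not input_guardrail(prompt):
        return "Request blocked by input guardrail."
    draft = agent(prompt)
    if not evaluator(draft):
        return "Escalated for human review."
    return output_guardrail(draft)


reply = guarded_reply(
    "Who handles invoices?",
    agent=lambda p: "Contact jane.doe@example.com.",
    evaluator=lambda draft: True,
)
print(reply)
```

Each layer can fail independently, which is the point: a prompt that slips past the input filter can still be caught by the evaluator or the output check.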

Finally, every production agent should have a kill switch, a controlled way to immediately disable the system if behavior drifts outside defined boundaries.
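
A kill switch can be as simple as a shared flag checked before every request. The sketch below uses `threading.Event` from the Python standard library so the flag is safe to set from another thread; the `KillSwitch` class and the fallback message are hypothetical, and a production system would likely back the flag with shared storage so it applies across processes.

```python
import threading
from typing import Callable, Optional


class KillSwitch:
    """A process-wide flag that disables the agent as soon as it is tripped."""

    def __init__(self) -> None:
        self._tripped = threading.Event()
        self.reason: Optional[str] = None

    def trip(self, reason: str) -> None:
        self.reason = reason
        self._tripped.set()

    def is_tripped(self) -> bool:
        return self._tripped.is_set()


def handle_request(switch: KillSwitch, request: str,
                   agent: Callable[[str], str]) -> str:
    # Check the switch before every request so a trip takes effect immediately.
    if switch.is_tripped():
        return "Agent disabled; request routed to a human queue."
    return agent(request)


switch = KillSwitch()
before = handle_request(switch, "status of order 42", lambda r: f"handled: {r}")
switch.trip("error rate outside defined boundaries")
after = handle_request(switch, "status of order 43", lambda r: f"handled: {r}")
print(before)
print(after)
```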

Monitoring and evaluating AI agents in production

An AI agent's real test begins once it is deployed, so performance, safety, and cost must be measured continuously in live environments. To ensure reliability at scale, enterprises should focus on five core monitoring practices:

1. Define success metrics tied to business outcomes. Establish clear targets such as resolution rate for a service agent, accuracy for an analytics agent, or time-to-completion for a process agent. Without defined benchmarks, it’s difficult to distinguish normal variance from true performance issues.

2. Log every decision path. Agent trace tools should capture the full sequence of perception, planning, tool calls, and outputs. This makes it possible to replay any interaction and identify precisely where the agent failed, especially when rare but high-impact errors occur.

3. Maintain human review loops. Assign subject matter experts to regularly review a sample of agent outputs. Their feedback helps refine prompts, tool configurations, and guardrails, preventing silent performance drift.

4. Track operational and cost signals. Monitor token usage, API calls, and compute cost per interaction to detect runaway spending early and maintain financial control over deployments.

5. Set automated rollback triggers. If error rates spike or behavior moves outside defined guardrails, the system should revert automatically rather than waiting for manual intervention.
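
Practices 4 and 5 can be combined in a small rolling-window monitor that signals a rollback when error rate or average cost crosses a threshold. This is a sketch under assumptions: the `RollbackMonitor` class, the window size, and the thresholds are illustrative values, not recommendations.

```python
from collections import deque


class RollbackMonitor:
    """Track recent interactions and signal when a rollback should trigger."""

    def __init__(self, window: int, max_error_rate: float, max_avg_cost: float):
        self.outcomes = deque(maxlen=window)  # True = success, False = error
        self.costs = deque(maxlen=window)     # cost per interaction
        self.max_error_rate = max_error_rate
        self.max_avg_cost = max_avg_cost

    def record(self, success: bool, cost: float) -> None:
        self.outcomes.append(success)
        self.costs.append(cost)

    def should_roll_back(self) -> bool:
        # Wait for a full window so a single early failure does not trigger.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        error_rate = self.outcomes.count(False) / len(self.outcomes)
        avg_cost = sum(self.costs) / len(self.costs)
        return error_rate > self.max_error_rate or avg_cost > self.max_avg_cost


monitor = RollbackMonitor(window=10, max_error_rate=0.2, max_avg_cost=0.05)
for _ in range(10):
    monitor.record(success=True, cost=0.01)
healthy = monitor.should_roll_back()

for _ in range(3):
    monitor.record(success=False, cost=0.01)
degraded = monitor.should_roll_back()
print(healthy, degraded)
```

After three failures enter the ten-interaction window, the error rate is 30%, above the 20% threshold, so the monitor signals a rollback.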

Common pitfalls enterprises face when building AI agents

Five patterns consistently derail agent initiatives. The preventive principle: Treat every agent as a living system requiring ongoing investment in monitoring, governance, and iteration.


How to get started with AI agents responsibly

For enterprises just beginning to build AI agents, the responsible starting point is a constrained, high-value workflow where manual effort is clear and automation risk is manageable. Service ticket triage, document summarization, and internal knowledge retrieval are common first use cases because they are repetitive, time-consuming, and low-risk.

Define success criteria before building. If a service agent should resolve 60% of tier-one tickets without escalation at 95% satisfaction, write that down and measure against it. Pilot in assisted mode first, move to semi-autonomous mode only after validation, and move to full autonomy only after governance is in place.
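
Writing the criteria down can be taken literally. The sketch below encodes the 60% resolution and 95% satisfaction targets from the example above and checks pilot results against them; the `SuccessCriteria` class, the `meets_criteria` function, and the sample counts are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriteria:
    min_resolution_rate: float = 0.60  # tier-one tickets resolved without escalation
    min_satisfaction: float = 0.95     # share of surveyed users who were satisfied


def meets_criteria(resolved: int, total: int, satisfied: int, surveyed: int,
                   criteria: SuccessCriteria = SuccessCriteria()) -> bool:
    """Return True only if both targets are met on the pilot data."""
    if total == 0 or surveyed == 0:
        return False  # no data is not evidence of success
    return (resolved / total >= criteria.min_resolution_rate
            and satisfied / surveyed >= criteria.min_satisfaction)


# Illustrative pilot numbers, not real results.
ready = meets_criteria(resolved=130, total=200, satisfied=96, surveyed=100)
print(ready)
```

A check like this can gate each move along the autonomy spectrum, so the agent is promoted only when the measured numbers support it.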

Scaling requires alignment across IT, data, and business teams. IT owns infrastructure and security, data teams own model performance and monitoring, and business teams own use-case definition and success measurement. Without a governed platform connecting all three, scaling from one agent to ten creates the same fragmentation that stalls most initiatives.

Build AI agents on a foundation that scales

The pattern across every section of this guide is the same: Agents fail not because the technology is immature, but because the infrastructure around them is fragmented. Building happens in one tool, governance in another, monitoring in a third, and deployment in a fourth. That fragmentation is what turns a successful pilot into a stalled initiative.

Dataiku, the Platform for AI Success, brings agent creation, governance, monitoring, and deployment into a single platform where business teams, data teams, and IT work in the same environment. No-code and code-based agent building, built-in guardrails, trace-level auditability, and enterprise-grade access controls mean agents move from prototype to production without switching tools or losing visibility.
