FAQs about machine learning and data quality

What is the minimum data quality baseline before deploying AI?

At a minimum, you need accuracy and completeness rates that are measurable and documented, not assumed. That means profiling every dataset that feeds the model, establishing baseline metrics, and defining thresholds below which deployment is blocked. There's no universal number, but if you cannot quantify the quality of your training data, you're not ready to trust the model it produces.
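As a rough illustration of what "measurable and documented" can look like, the sketch below profiles a list of records for completeness and accuracy and blocks deployment when either falls below a threshold. The names `QualityReport`, `profile`, and `deployment_allowed` are hypothetical, as are the thresholds in the usage note; a real pipeline would set these per dataset.

```python
from dataclasses import dataclass


@dataclass
class QualityReport:
    completeness: float  # share of required fields that are populated
    accuracy: float      # share of populated, checked values that pass validation


def profile(rows, required_fields, validators):
    """Measure completeness and accuracy for a list of dict records."""
    total_required = len(rows) * len(required_fields)
    filled = sum(
        1
        for row in rows
        for field in required_fields
        if row.get(field) not in (None, "")
    )
    checked = passed = 0
    for row in rows:
        for field, is_valid in validators.items():
            value = row.get(field)
            if value in (None, ""):
                continue  # missing values count against completeness, not accuracy
            checked += 1
            passed += bool(is_valid(value))
    return QualityReport(
        completeness=filled / total_required if total_required else 0.0,
        accuracy=passed / checked if checked else 0.0,
    )


def deployment_allowed(report, min_completeness, min_accuracy):
    """Gate: deployment is blocked if either metric is below its threshold."""
    return (
        report.completeness >= min_completeness
        and report.accuracy >= min_accuracy
    )
```

For example, calling `profile(rows, ["id", "age"], {"age": lambda v: 0 <= v <= 120})` and passing the result to `deployment_allowed(report, 0.95, 0.98)` yields a single pass/fail answer that can be logged alongside the metrics themselves. The thresholds here are placeholders, not recommendations.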

How does data quality impact generative AI hallucinations?

Generative AI models retrieve and synthesize content from source material. When that material contains inaccuracies, contradictions, or outdated information, the model weaves those defects into fluent, confident responses that look correct but are not. Better source data reduces the most preventable class of hallucinations, the ones that trace directly back to inaccurate or outdated source documents.

Why is data quality especially critical for agentic AI systems?

Because agents act on data autonomously. In a traditional ML system, a bad prediction sits in a dashboard until a human reviews it. In an agentic system, that same bad prediction triggers an action that feeds into the next decision and compounds from there.
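One way to picture the compounding is a deliberately simplified model: assume each step in an agent's chain is correct with the same probability and that errors are independent. Both assumptions are ours, not a claim about any particular system, and `chain_reliability` is a hypothetical helper.

```python
def chain_reliability(per_step_accuracy, steps):
    """Probability that every step in a chain is correct.

    Assumes each step has the same accuracy and that errors are independent,
    which is a simplification made for illustration only.
    """
    return per_step_accuracy ** steps


if __name__ == "__main__":
    for steps in (1, 5, 10, 20):
        print(steps, round(chain_reliability(0.95, steps), 3))
```

Under these assumptions, a step that is right 95% of the time yields a fully correct ten-step chain only about 60% of the time, which is why an error rate that looks tolerable on a dashboard can become costly once actions are chained.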

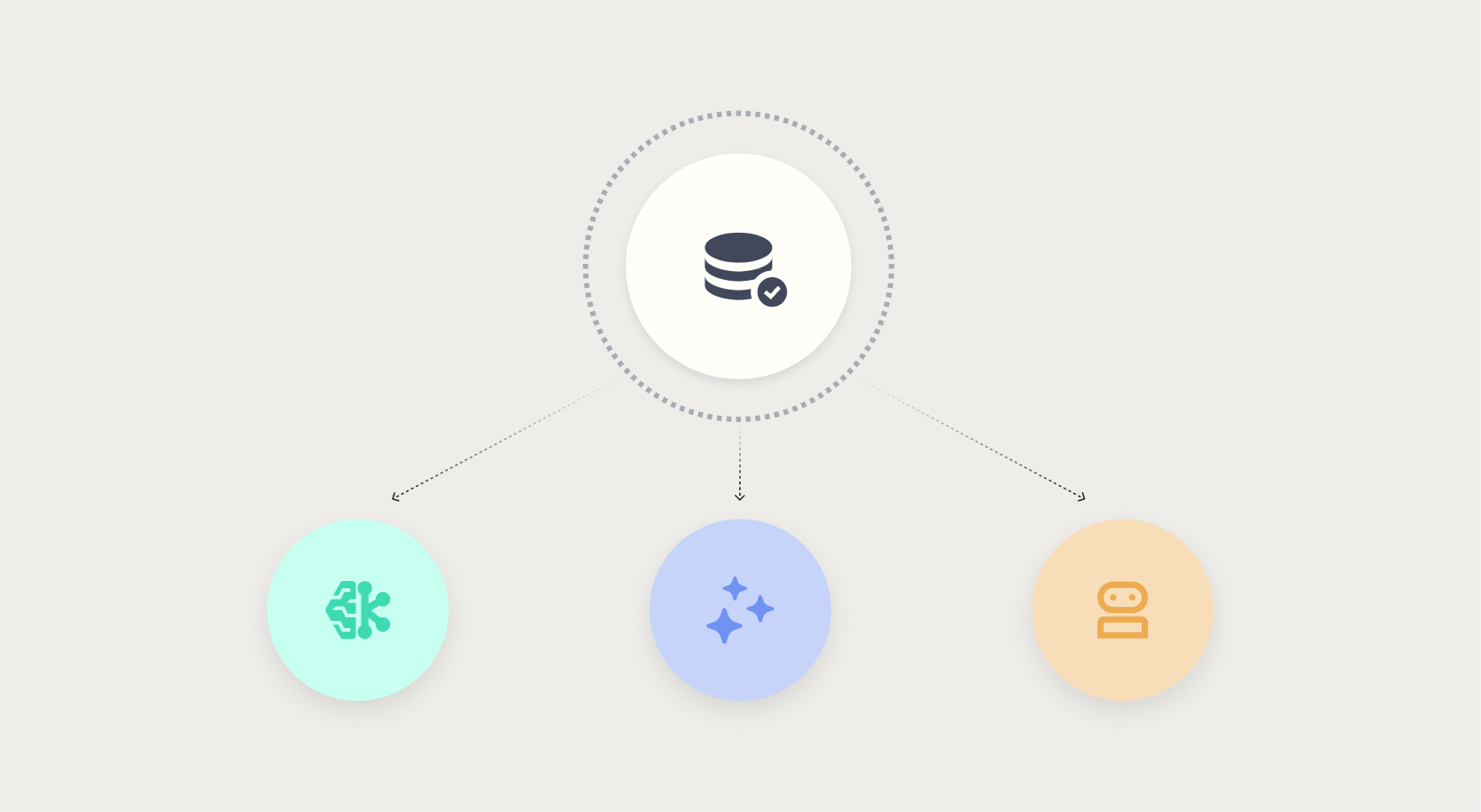

How do enterprises balance rule-based and ML-driven quality checks?

Rule-based checks handle known, stable patterns: format validation, range checks, and required fields. ML-driven checks handle novel or evolving patterns: anomaly detection, distribution drift, and cross-field dependencies that are too complex to codify manually. Most mature organizations run both in parallel, with rule-based checks as the first pass and ML-driven checks as the adaptive layer.
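The layering described above can be sketched as follows. The rule pass covers required fields, format, and range. For the adaptive layer, a simple z-score against recent history stands in for an ML-driven detector, so treat it as a placeholder rather than a real model. The field names (`email`, `amount`), the range limits, and the threshold are all hypothetical.

```python
import re
from statistics import mean, stdev

EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def rule_violations(record):
    """First pass: known, stable rules (required fields, format, range)."""
    problems = []
    for field in ("email", "amount"):
        if record.get(field) is None:
            problems.append(f"missing {field}")
    email = record.get("email")
    if email is not None and not EMAIL_RE.match(email):
        problems.append("bad email format")
    amount = record.get("amount")
    if amount is not None and not (0 <= amount <= 1_000_000):
        problems.append("amount out of range")
    return problems


def is_anomalous(value, history, z_threshold=3.0):
    """Adaptive layer: flag values far from what recent history suggests."""
    if len(history) < 2:
        return False  # not enough history to estimate spread
    sigma = stdev(history)
    if sigma == 0:
        return value != history[0]
    return abs(value - mean(history)) / sigma > z_threshold


def check(record, history):
    """Run the rule pass first; run the adaptive check only on records that pass."""
    problems = rule_violations(record)
    if not problems and is_anomalous(record["amount"], history):
        problems.append("amount is unusual relative to history")
    return problems
```

Running the cheap, deterministic rules first means the adaptive layer only sees records that are already well-formed, so its alerts point at patterns the rules could not have expressed, such as a value that is in range but far outside what recent history suggests.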

How can organizations measure ROI from data quality initiatives?

Track the downstream costs that data quality prevents: model retraining frequency, production incident rates, manual reconciliation hours, regulatory remediation costs, and the number of AI projects delayed or abandoned due to data trust issues. Even a modest reduction in the number of stalled or abandoned projects recovers significant time and budget.
