What is a machine learning platform?

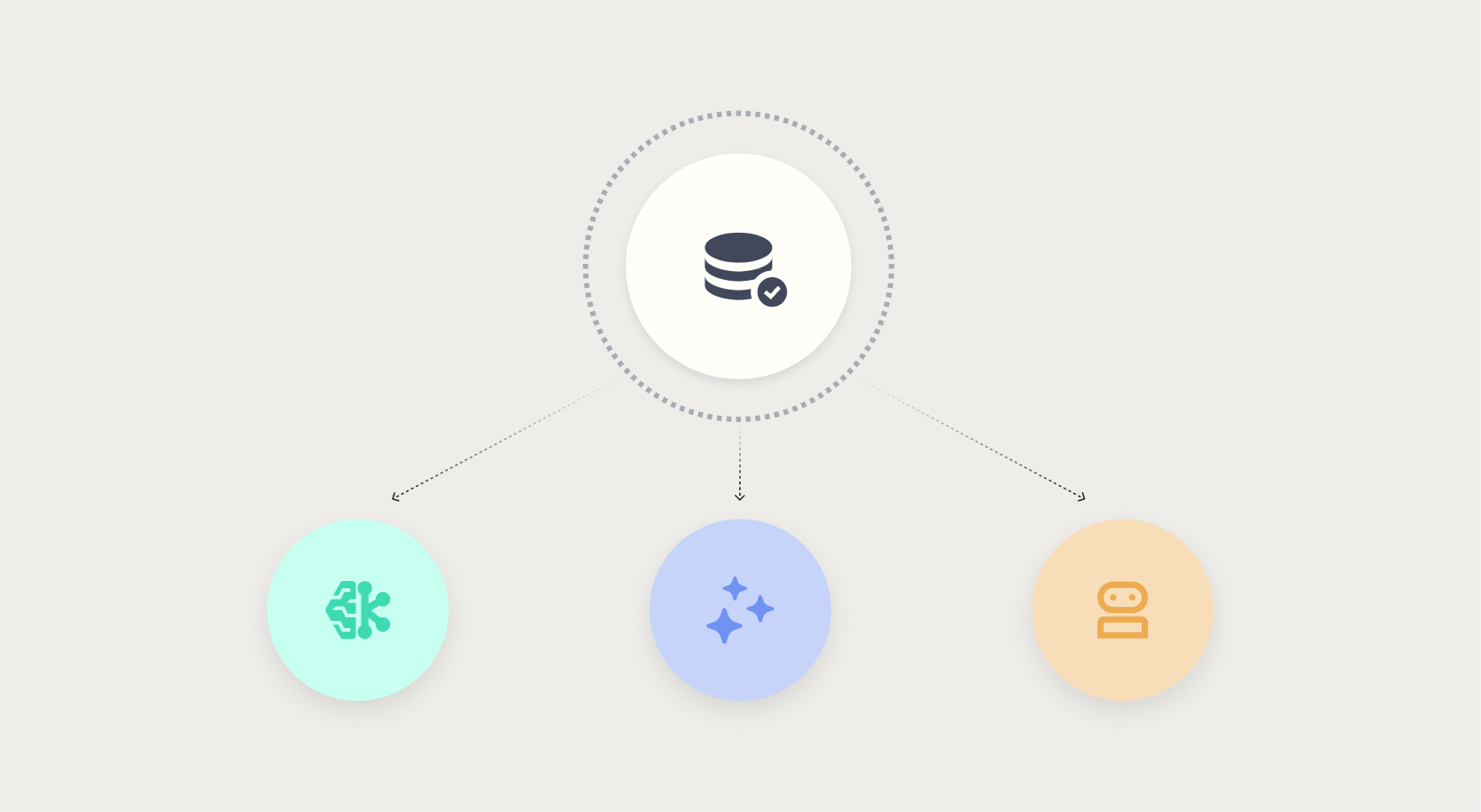

A machine learning platform is an integrated environment that covers data ingestion and preparation, model development, deployment, monitoring, and MLOps. Unlike standalone frameworks such as TensorFlow or PyTorch, a platform handles the full lifecycle, not just modeling.

Enterprise ML platforms serve data scientists building models, IT teams enforcing security, compliance teams auditing decisions, and business analysts consuming predictions without writing code.

When these groups operate on disconnected tools, you get duplicated work, inconsistent governance, compliance gaps, and models that never reach production. The platform's job is to close those gaps by putting everyone on the same governed surface.
