Plugin information

| Version | 1.2.1 |
|---|---|
| Author | Dataiku (Arnaud d’Esquerre, Nicolas Dalsass) |
| Released | 2020-07-15 |
| Last updated | 2025-06-09 |
| License | Apache 2.0 |
| Source code | GitHub |
| Reporting issues | GitHub |

What is ONNX?

ONNX is an open format built to represent machine learning models for scoring (inference) purposes. ONNX defines a common set of operators – the building blocks of machine learning and deep learning models – and a common file format to enable AI developers to use models with a variety of frameworks, tools, runtimes, and compilers. More info here

Description

This plugin aims at offering an easy way to deploy deep learning models to various systems with the ONNX runtime.

You can learn more about the languages, architectures and hardware accelerations supported by ONNX runtime here.

It offers the conversion of two kinds of models:

- Visual Deep Learning models trained with DSS

- Keras .h5 models

Note that for Visual Deep Learning models, the plugin exports the model itself but not the feature handling defined in DSS.
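Because the feature handling is not exported, any preprocessing that DSS applied (for example, rescaling numeric features) has to be reproduced before the ONNX model is called. The following is a minimal sketch, assuming standard rescaling of two numeric features. The feature names, means, and standard deviations are hypothetical and would have to be taken from your own model's settings.

```python
from typing import Dict, List

# Hypothetical statistics; in practice, copy them from the feature handling
# configured for your own model.
FEATURE_STATS: Dict[str, Dict[str, float]] = {
    "age": {"mean": 40.0, "std": 10.0},
    "income": {"mean": 50000.0, "std": 20000.0},
}
# The order in which the exported model expects its inputs (also an assumption).
FEATURE_ORDER: List[str] = ["age", "income"]


def standardize(row: Dict[str, float]) -> List[float]:
    """Rescale raw values to (value - mean) / std, in model input order."""
    scaled = []
    for name in FEATURE_ORDER:
        stats = FEATURE_STATS[name]
        scaled.append((row[name] - stats["mean"]) / stats["std"])
    return scaled


model_input = standardize({"age": 50.0, "income": 30000.0})
print(model_input)
```

The resulting list is what would be passed to the runtime as one row of the model's input.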

This plugin contains one recipe and one macro for each conversion type.

Visual Deep Learning models trained with DSS:

Keras .h5 models:

Installation Notes

The plugin can be installed from the Plugin Store or via the zip download (see installation panel on the right).

How to use the macros?

Convert saved model to ONNX macro

- If you don’t already have one, create a DSS Python 3 code environment with the following packages (i.e. the packages needed for Visual Deep Learning with TensorFlow>=2.8.0):

tensorflow>=2.8.0,<3.0

scikit-learn>=0.20,<0.21

scipy>=1.2,<1.3

statsmodels==0.12.2

Jinja2>=2.11,<2.12

MarkupSafe<2.1.0

itsdangerous<2.1.0

flask>=1.0,<1.1

pillow==8.4.0

cloudpickle>=1.3,<1.6

h5py==3.1.0

- Train a Visual Deep Learning model in DSS with this code environment (here is a tutorial to help you get started)

- Install this plugin

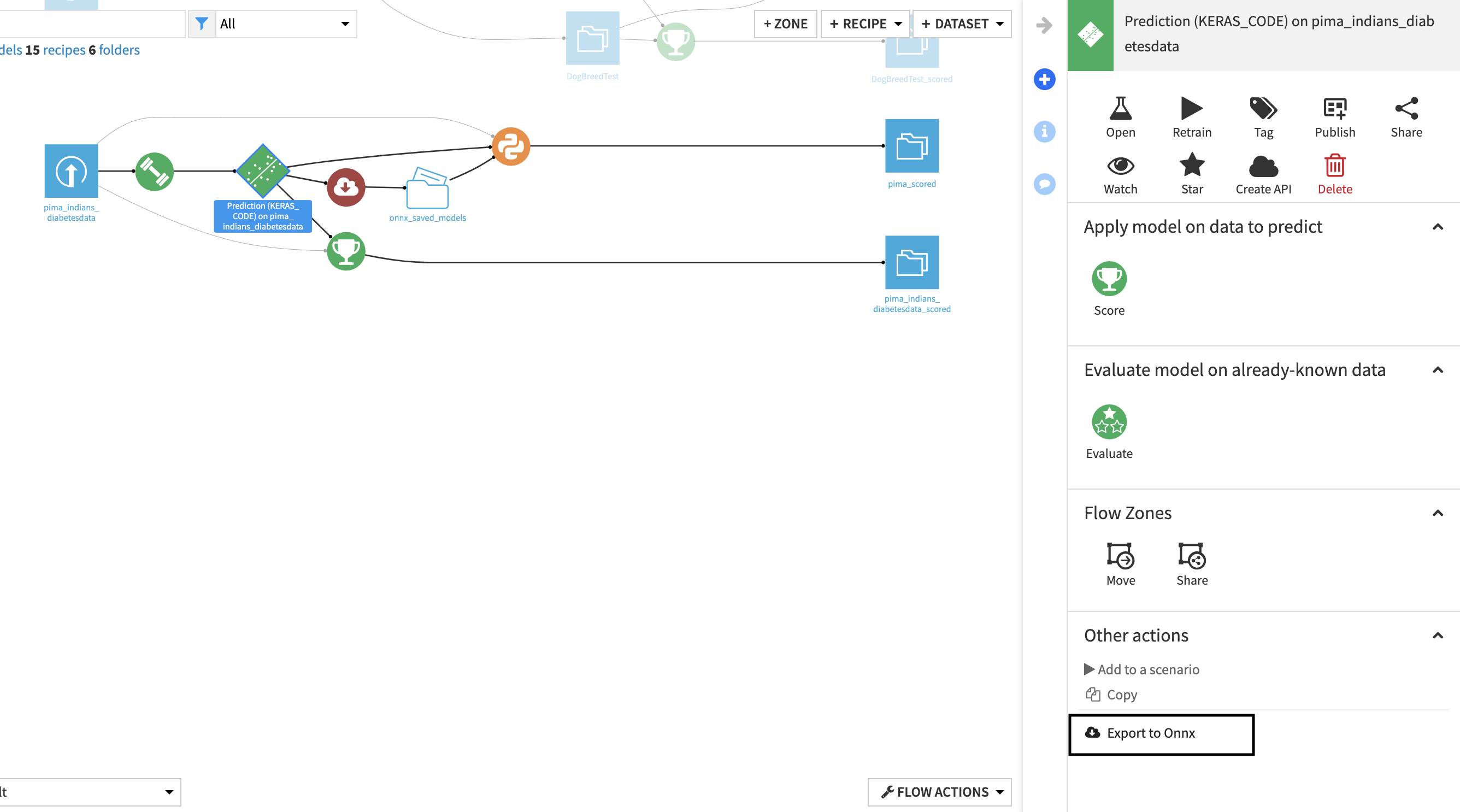

- Go the flow

- Click on the saved model

- In the right panel in the

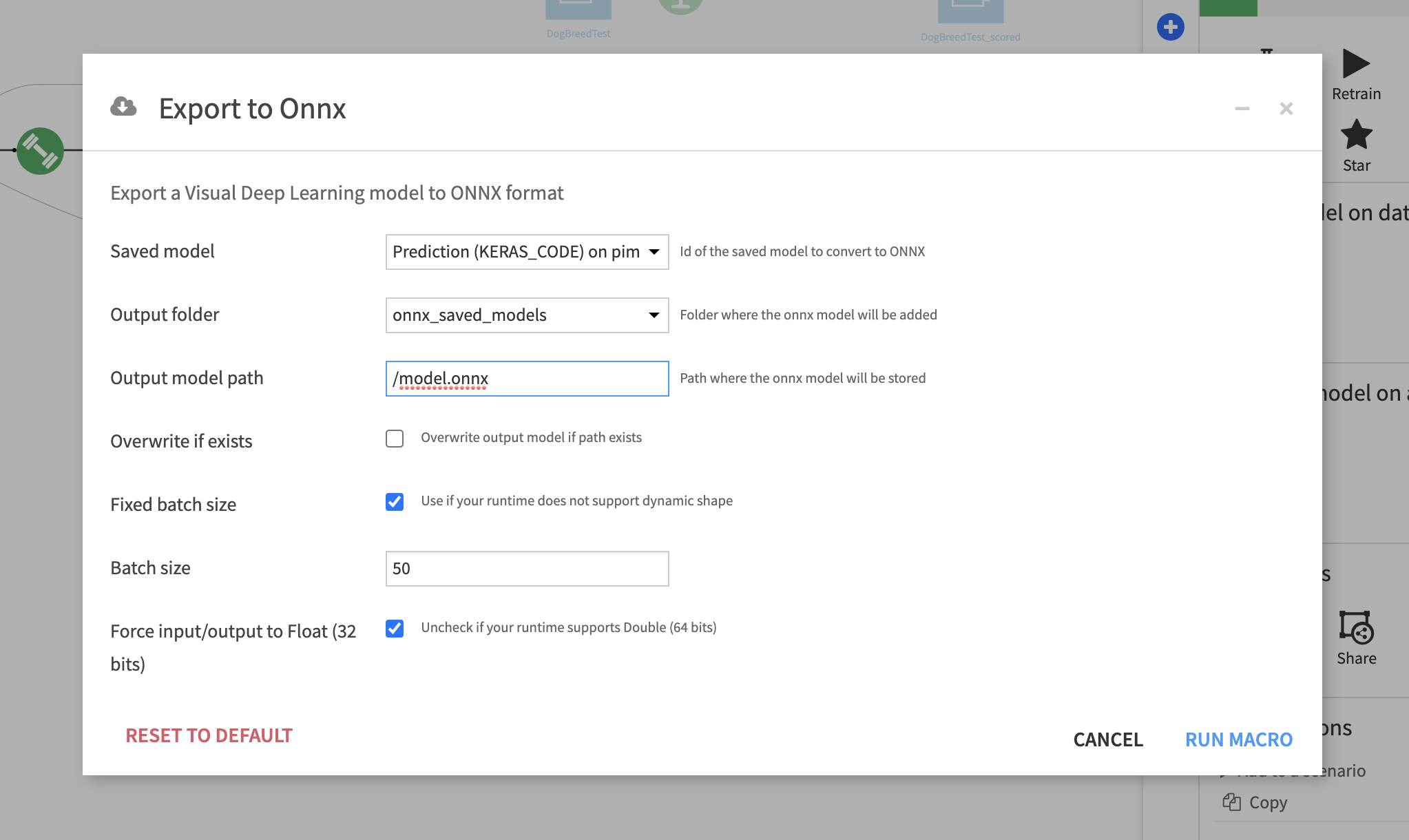

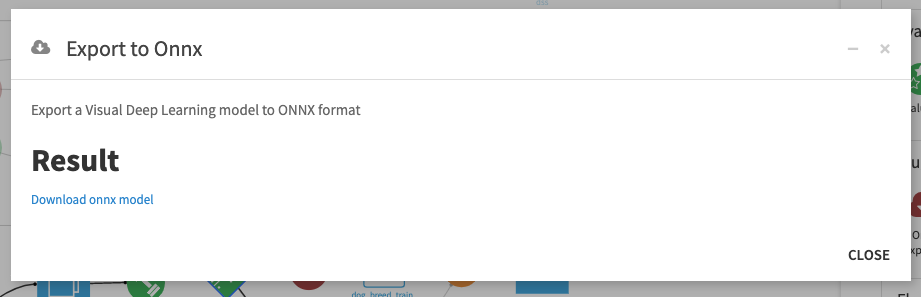

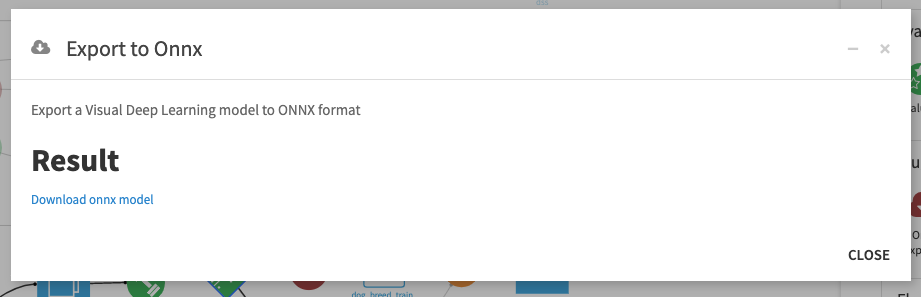

other actions section, click onExport to ONNX - Fill in the parameters (details below)

- Click on

RUN MACRO - Click on

Download ONNX modelto trigger the download. The model has also been added to the output folder

Available parameters

- Saved model (DSS saved model): Visual Deep Learning model trained in DSS to convert

- Output folder (DSS managed folder): Folder where the ONNX model will be added

- Output model path (String): Path where the ONNX model will be stored

- Overwrite if exists (boolean): Whether the model should overwrite an existing file at the same path

- Fixed batch size (boolean): Some runtimes do not support a dynamic batch size, so the size must be specified during export

- Batch size (int) [optional]: Batch size of the model’s input

- Force input/output to Float (32 bits) (boolean): Some runtimes do not support Double. Uncheck if your runtime supports Double

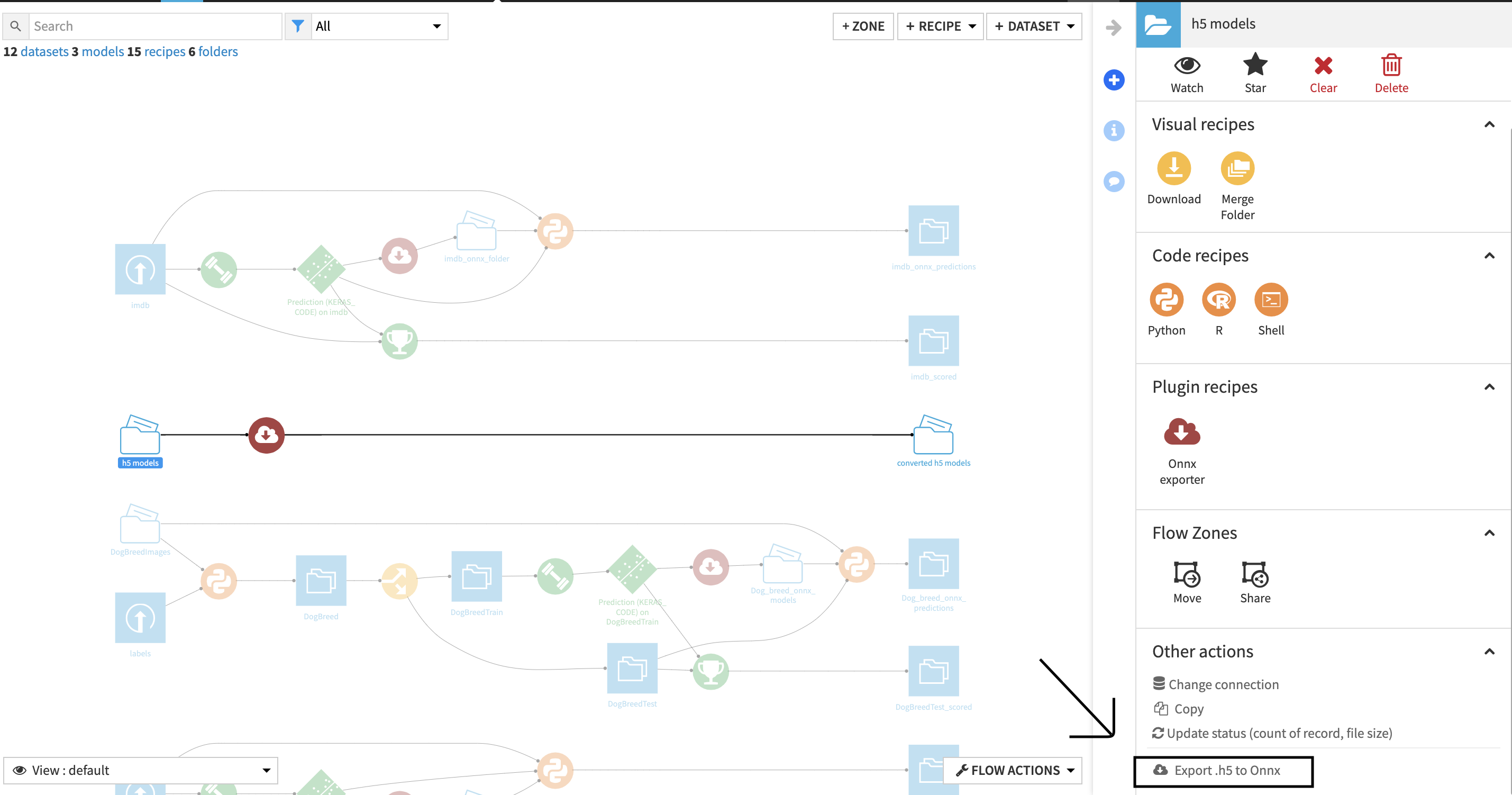
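The Fixed batch size and Force input/output to Float (32 bits) options determine what a runtime sees when it loads the exported file. The sketch below shows one way to score the exported model with the `onnxruntime` Python package. That choice is an assumption: the plugin only produces the file, and any ONNX-compatible runtime can be used. `concrete_shape` and `score` are hypothetical helper names. onnxruntime reports a dynamic dimension as `None` or a symbolic name, so the helper substitutes a concrete batch size, assuming that the batch dimension is the only dynamic one.

```python
from typing import List, Sequence, Union

Dim = Union[int, str, None]


def concrete_shape(shape: Sequence[Dim], batch_size: int) -> List[int]:
    """Replace dynamic dimensions (None or a symbolic name) with batch_size.

    Assumes the batch dimension is the only dynamic one.
    """
    return [d if isinstance(d, int) else batch_size for d in shape]


def score(model_path: str, batch_size: int = 1):
    """Run the exported model on a zero-filled batch and return its outputs."""
    # Imported lazily so the helper above stays usable without these packages.
    import numpy as np
    import onnxruntime as ort

    session = ort.InferenceSession(model_path, providers=["CPUExecutionProvider"])
    model_input = session.get_inputs()[0]
    shape = concrete_shape(model_input.shape, batch_size)
    # float32 matches an export made with "Force input/output to Float (32 bits)" checked.
    batch = np.zeros(shape, dtype=np.float32)
    return session.run(None, {model_input.name: batch})


print(concrete_shape([None, 28, 28, 1], batch_size=4))
print(concrete_shape(["unk__1", 10], batch_size=1))
```

With a model downloaded from the output folder, a call such as `score("model.onnx", batch_size=4)` would return a list of output arrays; the file name here is a placeholder. If the model was exported with a fixed batch size, the reported shape already contains only integers and the helper leaves it unchanged.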

Convert Keras .h5 model to ONNX macro

- Put a .h5 model file obtained through Keras’s model.save() method into a DSS Managed Folder

- Go to flow

- Click on the folder

- In the right panel in the

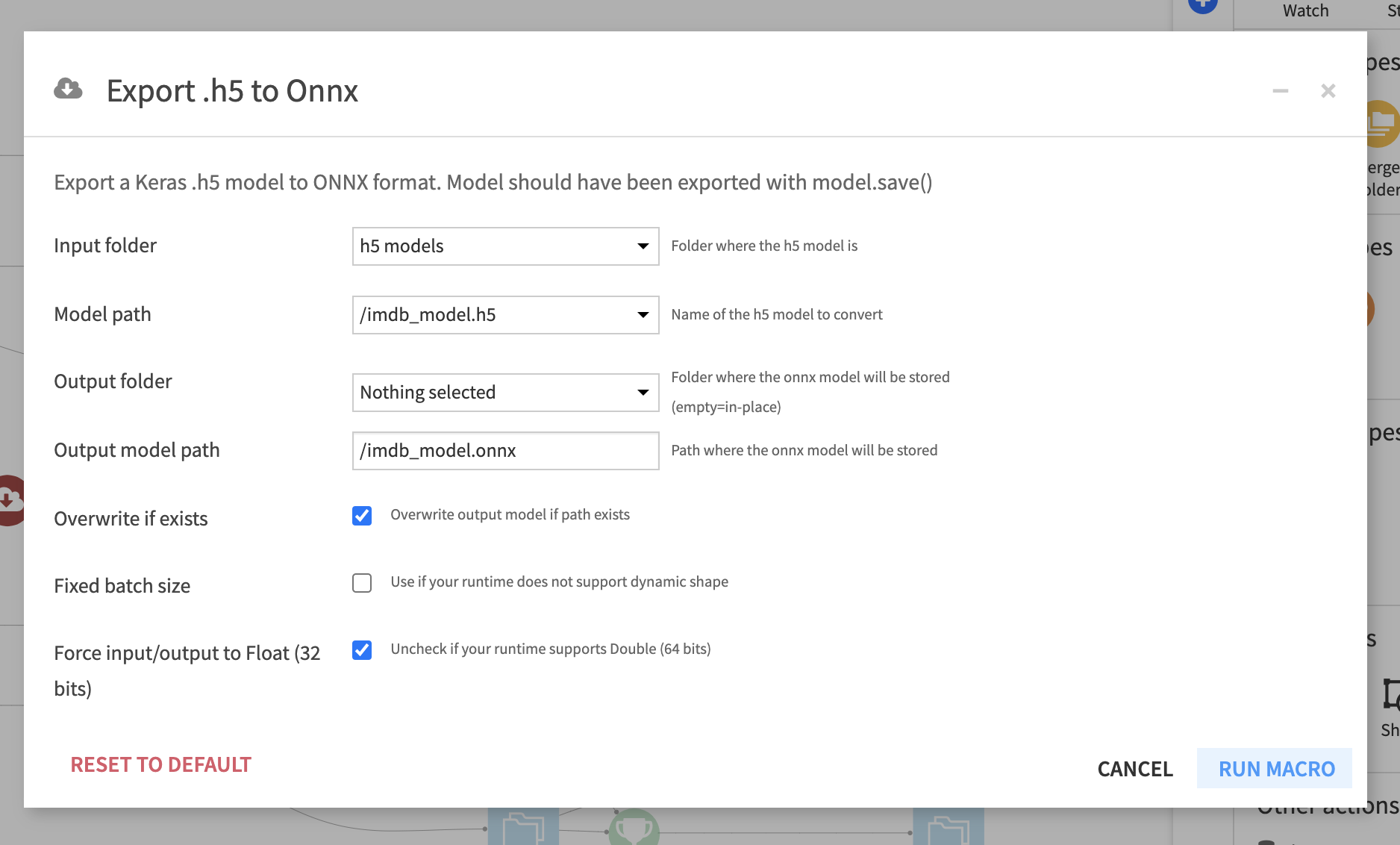

other actions section, click onExport .h5 to ONNX - Fill in the parameters (details below)

- Click on

RUN MACRO - Click on

Download onnx modelto trigger the download. The model has also been added to the output folder

Available parameters

- Input folder (DSS managed folder): Folder containing the .h5 model

- Model path (String): Path to the .h5 model to convert

- Output folder (DSS managed folder) [optional]: Folder where the ONNX model will be added. If Output folder is left empty, the model is added to the input folder

- Output model path (String): Path where the ONNX model will be stored

- Overwrite if exists (boolean): Whether the model should overwrite an existing file at the same path

- Fixed batch size (boolean): Some runtimes do not support a dynamic batch size, so the size must be specified during export

- Batch size (int) [optional]: Batch size of the model’s input

- Force input/output to Float (32 bits) (boolean): Some runtimes do not support Double. Uncheck if your runtime supports Double
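The interaction between Output folder, Output model path, and Overwrite if exists can be summarized in a few lines. This is only an illustration of the documented behavior, not the plugin's code. `resolve_output` is a hypothetical helper, and raising an error when the file exists and overwriting is disabled is an assumption, since the source does not say what happens in that case.

```python
from typing import Optional, Set, Tuple


def resolve_output(
    input_folder: str,
    output_folder: Optional[str],
    output_model_path: str,
    overwrite: bool,
    existing: Set[Tuple[str, str]],
) -> Tuple[str, str]:
    """Return the (folder, path) where the ONNX file would be written."""
    # An empty Output folder means "write next to the .h5 model".
    folder = output_folder if output_folder else input_folder
    # Assumed behavior: refuse to replace an existing file unless overwrite is set.
    if (folder, output_model_path) in existing and not overwrite:
        raise FileExistsError(f"{output_model_path} already exists in {folder}")
    return folder, output_model_path


# Folder names and paths below are placeholders.
print(resolve_output("keras_models", None, "/model.onnx", False, set()))
print(resolve_output("keras_models", "onnx_models", "/model.onnx", False, set()))
```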

The macros are also available in the Macro menu of a project.

How to use the recipes?

Convert saved model to ONNX recipe

- If you don’t already have one, create a DSS Python 3 code environment with the following packages (i.e. the packages needed for Visual Deep Learning with TensorFlow>=2.8.0):

tensorflow>=2.8.0,<3.0

scikit-learn>=0.20,<0.21

scipy>=1.2,<1.3

statsmodels==0.12.2

Jinja2>=2.11,<2.12

MarkupSafe<2.1.0

itsdangerous<2.1.0

flask>=1.0,<1.1

pillow==8.4.0

cloudpickle>=1.3,<1.6

h5py==3.1.0

- Train a Visual Deep Learning model in DSS with this code environment

- Create a managed folder by clicking on + DATASET > Folder

- Install this plugin

- Go to the flow

- Click on the + RECIPE > ONNX exporter button

- Click on the Convert saved model option in the modal

- Choose the saved model you just trained as input and the folder you created as output

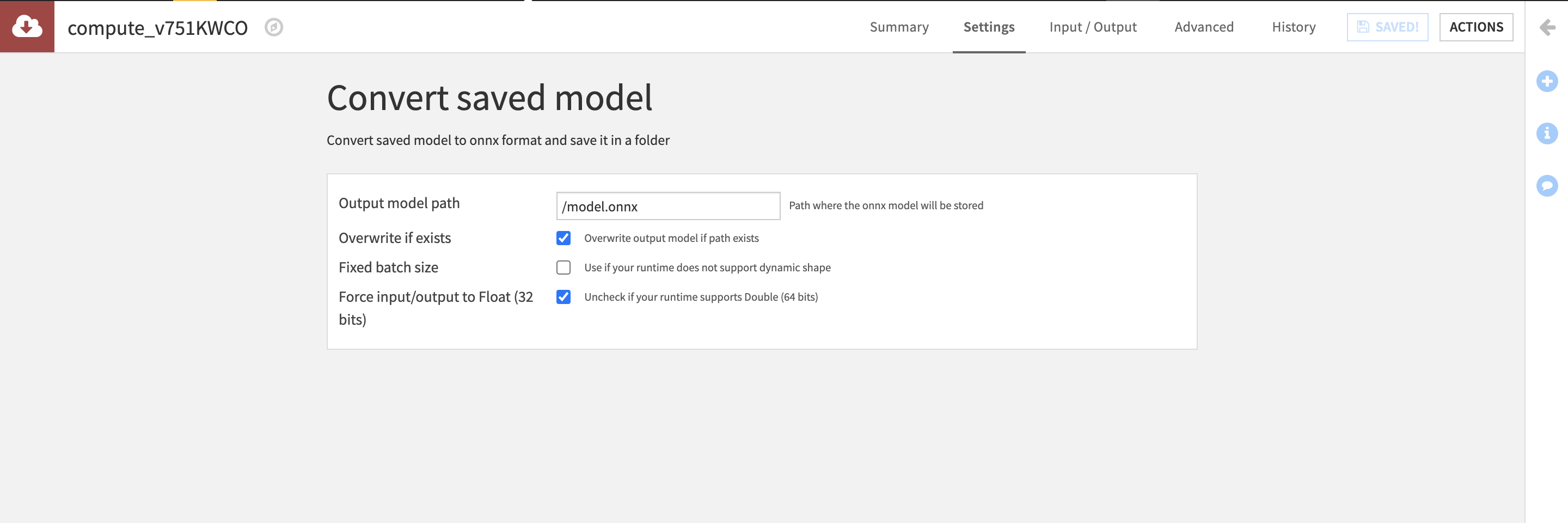

- Fill in the parameters on the recipe page (details below)

Available parameters

- Output model path (String): Path where the ONNX model will be stored

- Overwrite if exists (boolean): Whether the model should overwrite an existing file at the same path

- Fixed batch size (boolean): Some runtimes do not support a dynamic batch size, so the size must be specified during export

- Batch size (int) [optional]: Batch size of the model’s input

- Force input/output to Float (32 bits) (boolean): Some runtimes do not support Double. Uncheck if your runtime supports Double

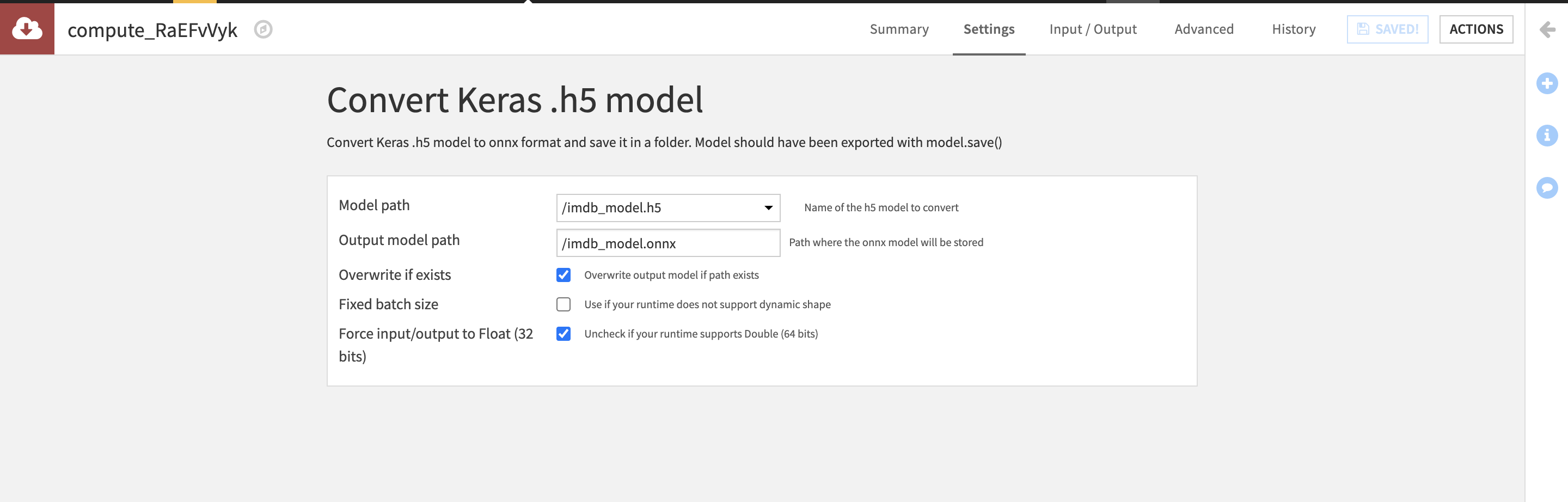
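Before training or converting, it can help to confirm that the code environment meets the version constraints listed in the first step of this procedure. The sketch below checks a version string against a constraint such as `>=2.8.0,<3.0`. It is a deliberately simplified comparison that handles only plain numeric versions and the operators shown, not the full rules pip applies. `parse_version` and `satisfies` are hypothetical helper names.

```python
import re
from typing import Tuple


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn '2.8.0' into (2, 8, 0); only plain numeric versions are handled."""
    return tuple(int(part) for part in text.split("."))


def satisfies(installed: str, spec: str) -> bool:
    """Check an installed version against a spec such as '>=2.8.0,<3.0'."""
    version = parse_version(installed)
    for clause in spec.split(","):
        match = re.fullmatch(r"(>=|<=|==|<|>)(\d+(?:\.\d+)*)", clause.strip())
        if match is None:
            raise ValueError(f"unsupported clause: {clause!r}")
        operator, bound_text = match.groups()
        bound = parse_version(bound_text)
        # Pad to the same length so that 3.0 and 3.0.0 compare as equal.
        width = max(len(version), len(bound))
        left = version + (0,) * (width - len(version))
        right = bound + (0,) * (width - len(bound))
        ok = {
            ">=": left >= right,
            "<=": left <= right,
            "==": left == right,
            "<": left < right,
            ">": left > right,
        }[operator]
        if not ok:
            return False
    return True


print(satisfies("2.11.0", ">=2.8.0,<3.0"))
print(satisfies("3.0.0", ">=2.8.0,<3.0"))
```

Inside the code environment, the installed version of a package can be read with the standard library, for example `importlib.metadata.version("tensorflow")`, and passed to `satisfies`.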

Convert Keras .h5 model to ONNX recipe

- Put a .h5 model file obtained through Keras’s model.save() method into a DSS Managed Folder

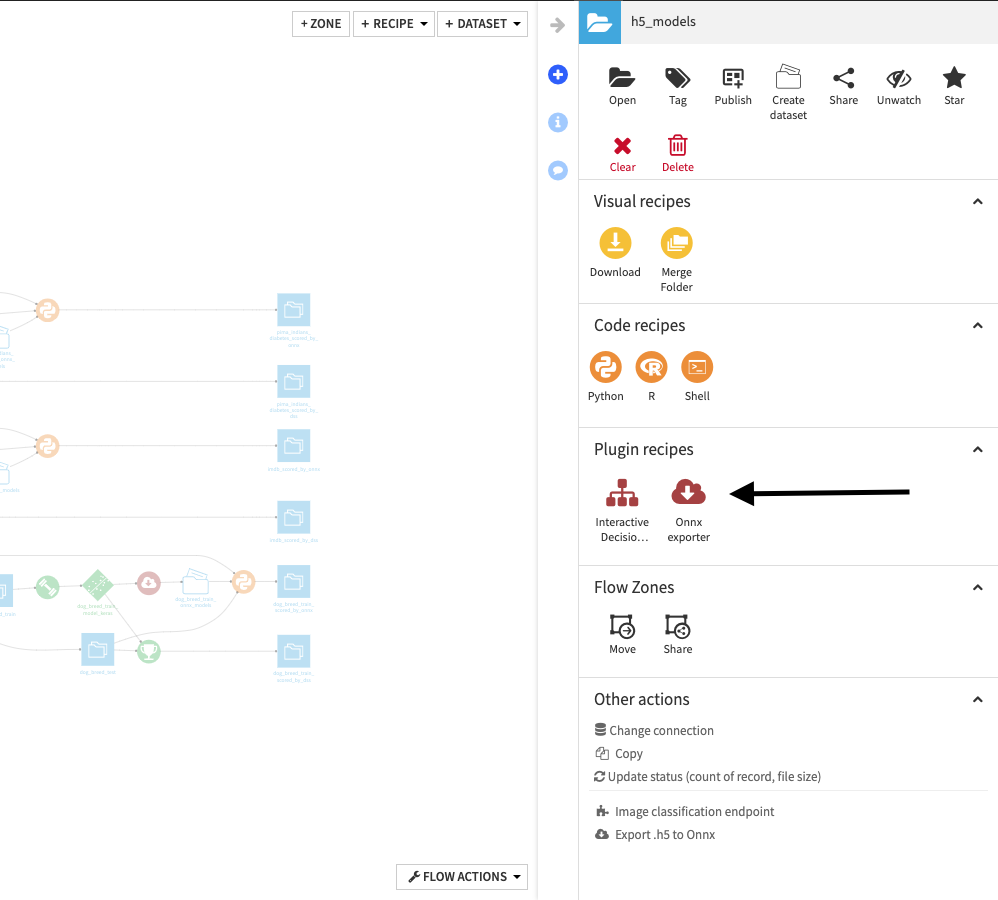

- Go to the flow

- Click on the folder

- In the right panel, in the Plugin recipes section, click on ONNX exporter

- Click on the Convert Keras .h5 model option in the modal

- Fill in the parameters (details below)

Available parameters

- Model path (String): Path to the .h5 model to convert

- Output folder (DSS managed folder) [optional]: Folder where the ONNX model will be added. If Output folder is left empty, the model is added to the input folder

- Output model path (String): Path where the ONNX model will be stored

- Overwrite if exists (boolean): Whether the model should overwrite an existing file at the same path

- Fixed batch size (boolean): Some runtimes do not support a dynamic batch size, so the size must be specified during export

- Batch size (int) [optional]: Batch size of the model’s input

- Force input/output to Float (32 bits) (boolean): Some runtimes do not support Double. Uncheck if your runtime supports Double
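To show what the Fixed batch size, Batch size, and Force input/output to Float (32 bits) parameters correspond to in code, the sketch below converts a Keras .h5 file with the `tf2onnx` package. This is an assumption made for illustration: the source does not state which converter the plugin uses internally. `export_shape` and `convert_h5_to_onnx` are hypothetical names, and the sketch handles single-input models only.

```python
from typing import Optional, Sequence, Tuple


def export_shape(
    model_input_shape: Sequence[Optional[int]],
    fixed_batch_size: bool,
    batch_size: Optional[int] = None,
) -> Tuple[Optional[int], ...]:
    """Return the input shape to export with; None keeps the batch dimension dynamic."""
    if fixed_batch_size and batch_size is None:
        raise ValueError("a batch size is required when the batch size is fixed")
    first = batch_size if fixed_batch_size else None
    return (first, *model_input_shape[1:])


def convert_h5_to_onnx(
    h5_path: str,
    onnx_path: str,
    fixed_batch_size: bool = False,
    batch_size: Optional[int] = None,
) -> None:
    """Load a Keras .h5 file and write an ONNX file (single-input models only)."""
    # Imported lazily: both packages are heavy and only needed for the conversion.
    import tensorflow as tf
    import tf2onnx

    model = tf.keras.models.load_model(h5_path)
    shape = export_shape(tuple(model.inputs[0].shape), fixed_batch_size, batch_size)
    # float32 mirrors the "Force input/output to Float (32 bits)" option.
    signature = [tf.TensorSpec(shape, tf.float32, name="input")]
    tf2onnx.convert.from_keras(model, input_signature=signature, output_path=onnx_path)


print(export_shape((None, 224, 224, 3), fixed_batch_size=True, batch_size=8))
print(export_shape((None, 10), fixed_batch_size=False))
```

A call such as `convert_h5_to_onnx("model.h5", "model.onnx", fixed_batch_size=True, batch_size=8)` would write a file whose input accepts exactly eight rows at a time; both file names are placeholders.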