Scale machine learning impact

Break down silos between teams and turn individual models into shared, production-ready ML. Dataiku enables more people to build, deploy, and improve models within enterprise standards.

Production ML, scaled across teams

Standardize, deploy, and monitor machine learning in one governed environment.

One environment from exploration to production

Bring notebooks, visual workflows, autoML, and deployment together in one governed environment so models move forward without handoffs or fragmentation.

Production-ready data, by default

Standardize data preparation with AI-assisted workflows that document, version, and govern every step, ensuring consistent, trusted inputs at scale.

Flexible tools, shared standards

Teams work the way they prefer (no-code, low-code, or full-code) while following shared practices for features, validation, and deployment across projects.

Production-ready models

Standardize how models are packaged, deployed, and monitored so ML work reliably reaches production.

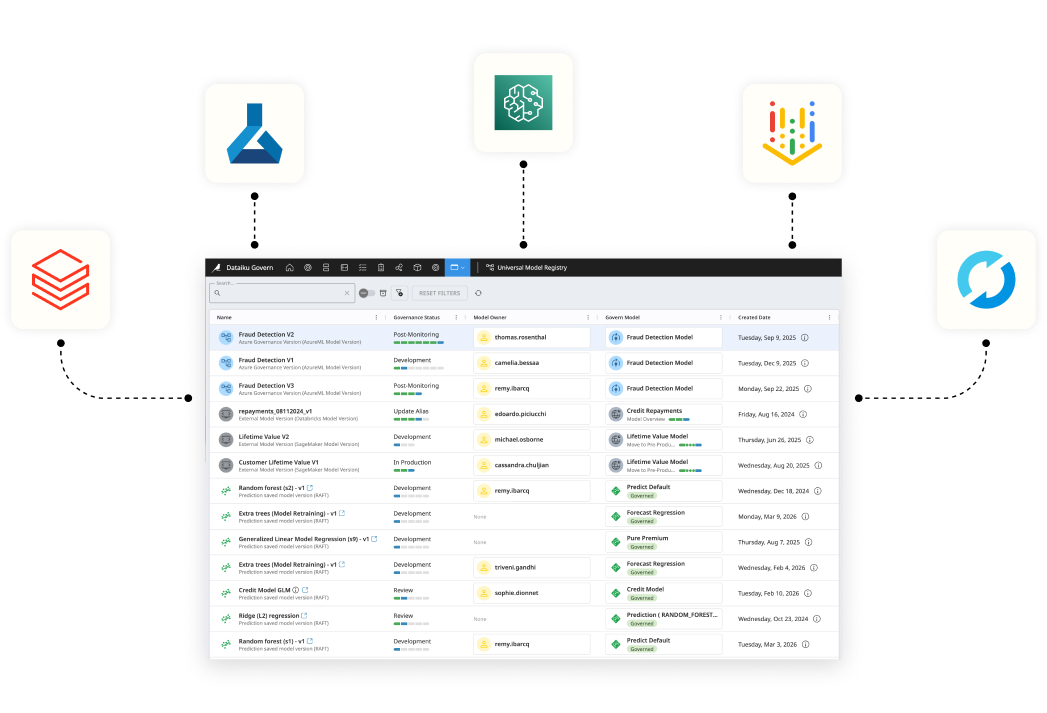

Governed, trusted, and scalable data science

Apply enterprise standards to ML-assisted data preparation by default, governing automation, approvals, and monitoring as usage scales.

Governance across projects

Apply access controls, approvals, and documentation consistently, no matter who builds the model.

Explainability and fairness

Assess and validate models, and check them for bias, with built-in explainability tools.

Scale safely over time

Ensure models remain reliable, explainable, and compliant as usage grows.

Frequently asked questions

Dataiku unifies data preparation, feature engineering, model development, deployment, and monitoring in a single governed platform. This eliminates tool fragmentation and accelerates production-ready machine learning at enterprise scale.

Yes. Teams can use visual data prep and AutoML, low-code workflows, or full-code notebooks (Python, R, SQL). This flexibility enables collaboration across analysts, data scientists, and engineers within the same environment.

Models are operationalized through automated deployment pipelines with built-in versioning, monitoring dashboards, drift detection, and retraining workflows, supporting reliable, governed MLOps practices.

The platform supports enterprise feature store integration, scalable compute environments, forecasting and time series modeling, and structured evaluation frameworks to power predictive analytics use cases.

Built-in lineage, experiment tracking, and explainable AI capabilities help teams understand predictions, validate fairness, and maintain trust in data-driven decision-making.