A Unified Foundation That Scales

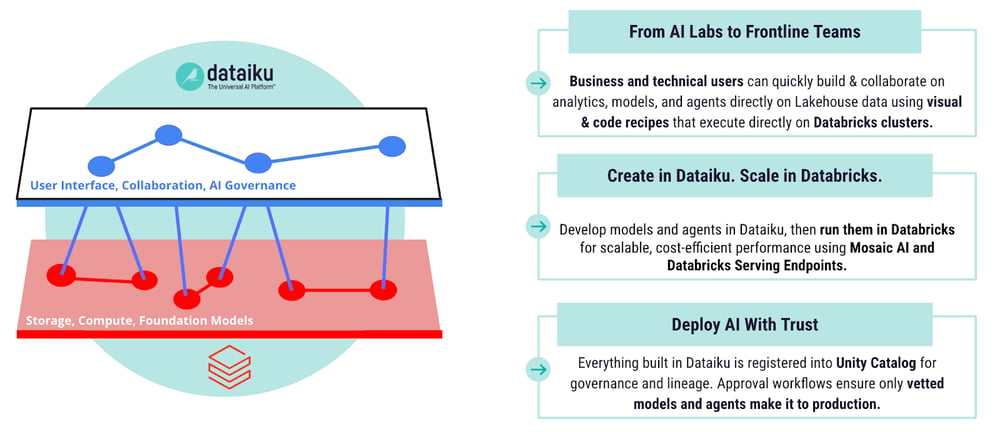

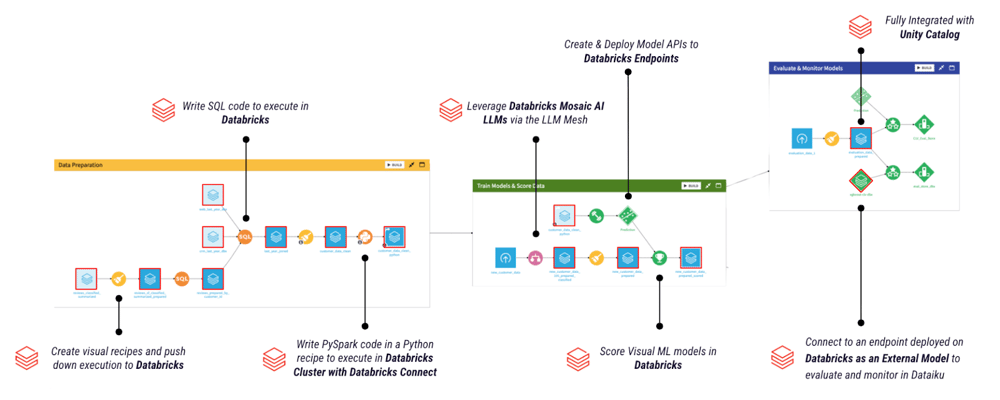

The Dataiku and Databricks integration is designed for scale, elasticity, and trust. Through native Delta Lake connectivity and pushdown compute, teams can process massive datasets directly on Databricks while orchestrating visual or code-based workflows in Dataiku. Because computation happens where the data lives, there is no need to copy datasets into a separate processing environment, which avoids duplication and reduces the risk that extra copies introduce.
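To make the pushdown idea concrete, here is a minimal, generic sketch. It does not use any Dataiku or Databricks API: an in-memory SQLite database stands in for the remote engine, and the table name `orders`, its columns, and all function names are hypothetical. It only contrasts pulling every row to the client with sending the aggregation to the engine and receiving just the result.

```python
import sqlite3


def build_demo_engine(n_rows: int = 10_000) -> sqlite3.Connection:
    """Create an in-memory SQLite database as a stand-in for a remote engine.

    Assumption: the `orders` table and its columns are illustrative only.
    """
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (region TEXT, amount REAL)")
    regions = ["emea", "amer", "apac"]
    rows = [(regions[i % 3], float(i % 100)) for i in range(n_rows)]
    conn.executemany("INSERT INTO orders VALUES (?, ?)", rows)
    return conn


def totals_without_pushdown(conn: sqlite3.Connection):
    """Fetch every row and aggregate on the client side."""
    totals = {}
    rows_transferred = 0
    for region, amount in conn.execute("SELECT region, amount FROM orders"):
        rows_transferred += 1
        totals[region] = totals.get(region, 0.0) + amount
    return totals, rows_transferred


def totals_with_pushdown(conn: sqlite3.Connection):
    """Let the engine aggregate; only one row per region comes back."""
    rows = conn.execute(
        "SELECT region, SUM(amount) FROM orders GROUP BY region"
    ).fetchall()
    return dict(rows), len(rows)


if __name__ == "__main__":
    engine = build_demo_engine()
    _, pulled = totals_without_pushdown(engine)
    _, pushed = totals_with_pushdown(engine)
    print(f"rows transferred without pushdown: {pulled}")
    print(f"rows transferred with pushdown: {pushed}")
```

Both functions return the same totals, but the first moves all 10,000 rows to the client while the second moves only 3. The same principle applies at far larger scale when the engine is a distributed platform rather than a local database.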

Databricks Unity Catalog centralizes permissions, lineage, and auditing for data and models. Dataiku Govern layers on structured approvals, end-to-end lineage, and documentation across projects. Together, they ensure that scalability never compromises accountability.

This architecture lets enterprises expand AI operations without increasing operational overhead. IT keeps the visibility and control it requires, while business and data teams work independently within approved guardrails. The result is a scalable, governed approach to enterprise AI.