The Dataiku LLM Mesh

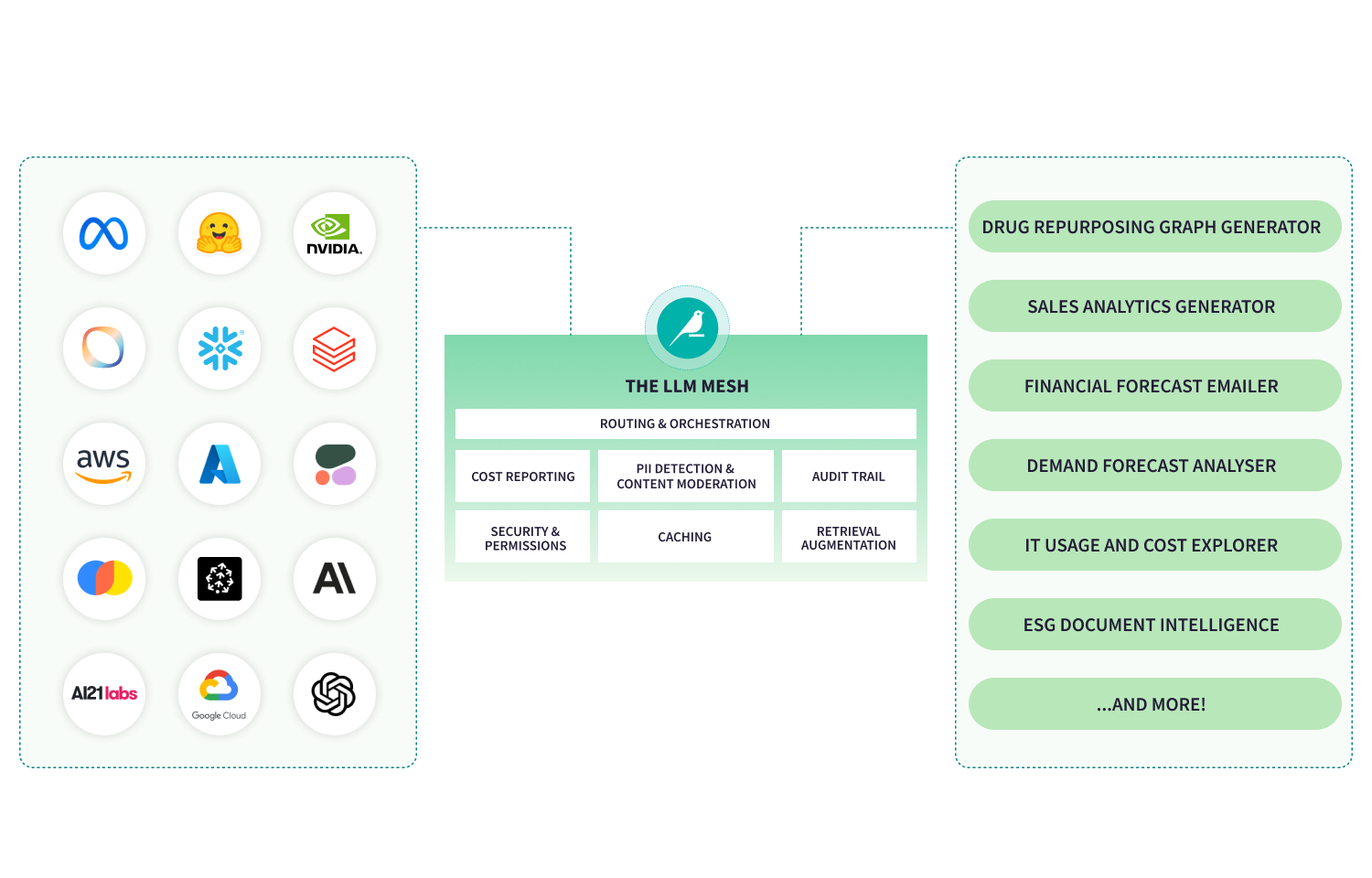

The Dataiku LLM Mesh centralizes how teams connect to, manage, and govern LLM services. It enables multi-model strategies, cost oversight, performance routing, and enterprise-grade safety without vendor lock-in.

Enforce a secure gateway

Model choice without rearchitecting

Connect to a broad ecosystem of LLM providers and self-hosted models through a secure, agnostic gateway. Switch or mix models based on cost, performance, and compliance needs without breaking your applications.

Enterprise-ready control and compliance

Enforce policies via a governed API layer that manages routing, screening, moderation, cost tracking, and auditing, reducing risk while preserving agility.

Performance & cost optimization

Monitor LLM usage and round-trip performance in real time, cache frequent responses for efficiency, and pick the right model for each task, avoiding runaway spend while meeting SLAs.
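To make the caching idea concrete, here is a minimal sketch of exact-match response caching in front of a model call. It is an illustration under stated assumptions, not Dataiku's implementation or API: `CachedLLM`, `fake_backend`, and the hit/miss counters are hypothetical names, and the backend is a stand-in for a real provider call.

```python
import hashlib
from typing import Callable, Dict


class CachedLLM:
    """Hypothetical wrapper: caches responses for prompts seen before."""

    def __init__(self, backend: Callable[[str], str]) -> None:
        self.backend = backend            # any prompt -> text callable
        self.cache: Dict[str, str] = {}   # prompt hash -> cached response
        self.hits = 0
        self.misses = 0

    def complete(self, prompt: str) -> str:
        # Key the cache on a hash of the exact prompt text.
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self.cache:
            self.hits += 1
            return self.cache[key]
        # Cache miss: call the backend once and remember the answer.
        self.misses += 1
        response = self.backend(prompt)
        self.cache[key] = response
        return response


def fake_backend(prompt: str) -> str:
    """Stand-in for a real (billable) provider call."""
    return f"echo: {prompt}"


llm = CachedLLM(fake_backend)
llm.complete("What is our refund policy?")   # goes to the backend
llm.complete("What is our refund policy?")   # served from the cache
```

In this sketch only identical prompts hit the cache. A production gateway would also need expiry and a policy for which responses are safe to reuse.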

One secure gateway for every LLM

The LLM Mesh centralizes how your organization connects to large language models. Route all LLM traffic through a secure, governed layer that decouples applications from specific providers. Adopt new models, switch vendors, or balance performance and cost without rewriting applications. Teams move fast. IT retains control. Your architecture stays future-proof.
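The decoupling described above can be sketched as a small routing layer. This is a generic illustration, not the LLM Mesh API: `Gateway`, `register_provider`, `set_route`, and the two stub providers are hypothetical names chosen for the example. The point it shows is that application code asks for a task, and the mapping from task to provider lives in one place.

```python
from typing import Callable, Dict

Provider = Callable[[str], str]  # assumed shape: prompt in, text out


class Gateway:
    """Hypothetical gateway: applications name a task, never a vendor."""

    def __init__(self) -> None:
        self._providers: Dict[str, Provider] = {}
        self._routes: Dict[str, str] = {}  # task name -> provider name

    def register_provider(self, name: str, provider: Provider) -> None:
        self._providers[name] = provider

    def set_route(self, task: str, provider_name: str) -> None:
        if provider_name not in self._providers:
            raise KeyError(f"unknown provider: {provider_name}")
        self._routes[task] = provider_name

    def complete(self, task: str, prompt: str) -> str:
        # Look up the provider currently assigned to this task.
        provider = self._providers[self._routes[task]]
        return provider(prompt)


# Stub providers standing in for real vendor or self-hosted models.
def vendor_a(prompt: str) -> str:
    return f"[vendor-a] {prompt}"


def vendor_b(prompt: str) -> str:
    return f"[vendor-b] {prompt}"


gateway = Gateway()
gateway.register_provider("vendor-a", vendor_a)
gateway.register_provider("vendor-b", vendor_b)
gateway.set_route("summarize", "vendor-a")

first = gateway.complete("summarize", "Q3 report")
gateway.set_route("summarize", "vendor-b")  # switch vendors centrally
second = gateway.complete("summarize", "Q3 report")
```

The calling code is identical before and after the switch. Only the route changed, which is what lets a team swap models without touching applications.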

Govern, optimize, and scale with confidence

The LLM Mesh embeds policy enforcement directly into the LLM connection layer. Control routing, enforce usage limits, monitor spend, and audit activity, all in one place. With real-time visibility into performance and cost, organizations can choose the right model for each task, prevent runaway spend, and scale GenAI responsibly across the enterprise.
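As a rough illustration of enforcing limits in the connection layer, the sketch below checks a spend budget before every call and records each decision in an audit log. It assumes a flat cost per call for simplicity; `GovernedClient`, `BudgetExceeded`, and the field names are hypothetical and do not reflect how Dataiku implements these controls.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


class BudgetExceeded(Exception):
    """Raised when a call would push spend past the configured budget."""


@dataclass
class GovernedClient:
    """Hypothetical policy layer wrapping a prompt -> text backend."""

    backend: Callable[[str], str]
    cost_per_call: float   # assumed flat price; real pricing is per token
    budget: float
    spent: float = 0.0
    # Each entry: (user, decision, cost charged)
    audit_log: List[Tuple[str, str, float]] = field(default_factory=list)

    def complete(self, user: str, prompt: str) -> str:
        # Enforce the usage limit before the request leaves the gateway.
        if self.spent + self.cost_per_call > self.budget:
            self.audit_log.append((user, "denied", 0.0))
            raise BudgetExceeded(f"budget of {self.budget} reached")
        response = self.backend(prompt)
        self.spent += self.cost_per_call
        self.audit_log.append((user, "allowed", self.cost_per_call))
        return response


def stub_model(prompt: str) -> str:
    """Stand-in for a real provider call."""
    return prompt.upper()


client = GovernedClient(backend=stub_model, cost_per_call=0.5, budget=1.0)
client.complete("alice", "hello")
client.complete("bob", "world")
# A third call would exceed the budget and raise BudgetExceeded.
```

Because the check and the log live in the wrapper, every application that goes through it gets the same limits and the same audit trail without extra code.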

See more GenAI & agentic features

Dataiku Agent Hub

Build, deploy, and manage AI agents grounded in your enterprise data, with governance built in from the start.

LLM Guard Services

Standardize agent performance and alignment checks across use cases to maintain reliability and trust.

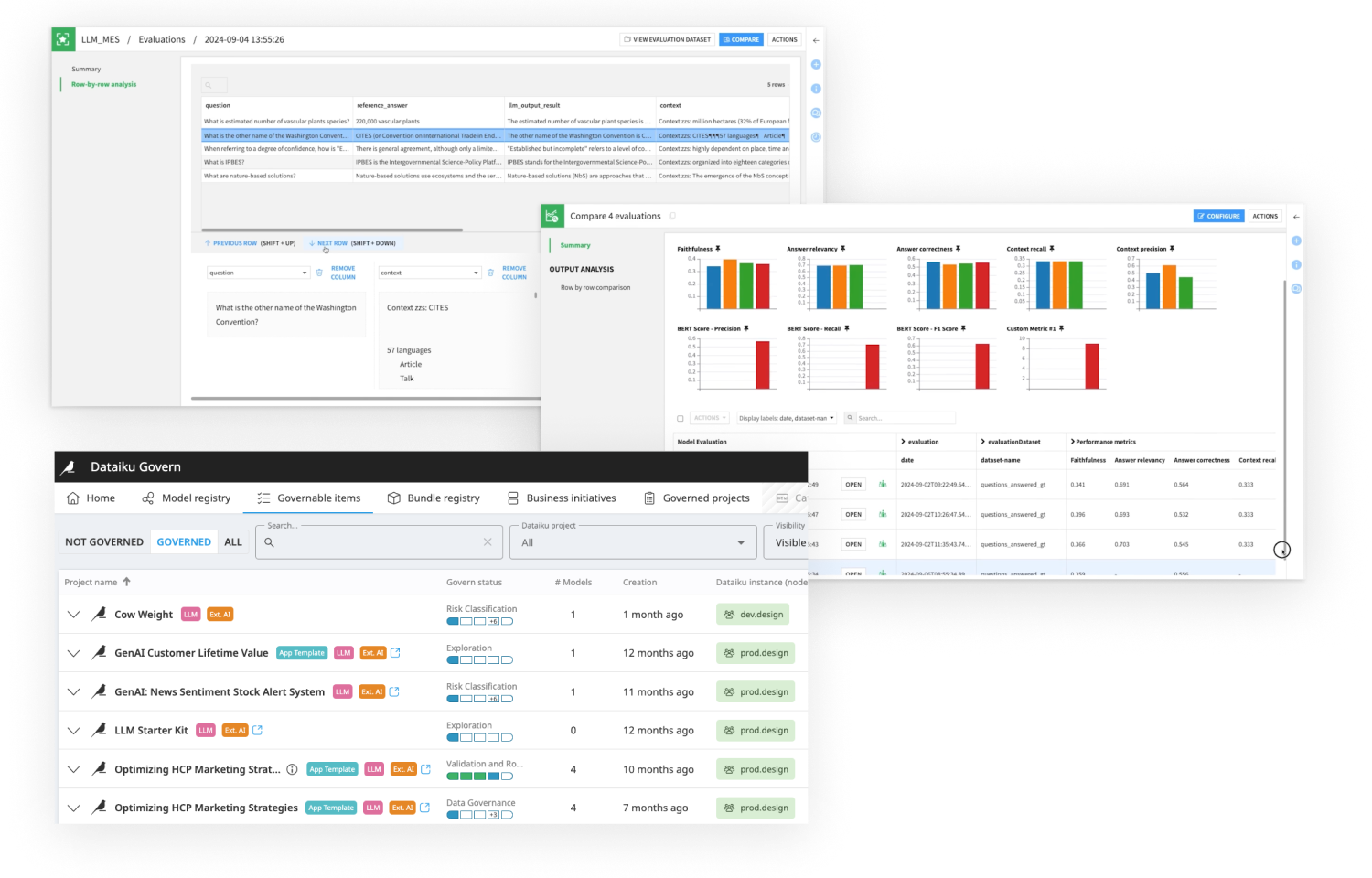

GenAIOps

Build, deploy, and monitor generative AI applications with full visibility and control.

Loved by customers and recognized by analysts

“The platform is intuitive, collaborative, and streamlines workflows from data prep to model deployment. Dataiku has truly transformed how we handle data!”

Data scientist

Retail