GenAIOps with Dataiku

Manage LLMs and AI agents at enterprise scale with built-in controls for compliance, cost, and data security across every stage of the AI lifecycle.

Govern all things GenAI

Connect to any LLM

Test, swap, and deploy models from providers like OpenAI, Anthropic, and AWS Bedrock, or self-hosted models from Hugging Face, without disrupting existing workflows or locking into a single vendor.

Control GenAI cost

Set budgets, track usage, and enforce cost limits across every LLM and AI agent in your organization, so GenAI spend stays predictable as adoption grows.

Protect sensitive data

Automatically detect and redact sensitive data, moderate content, and enforce compliance policies across every GenAI application before issues reach production.

Know exactly how your LLMs and agents are performing

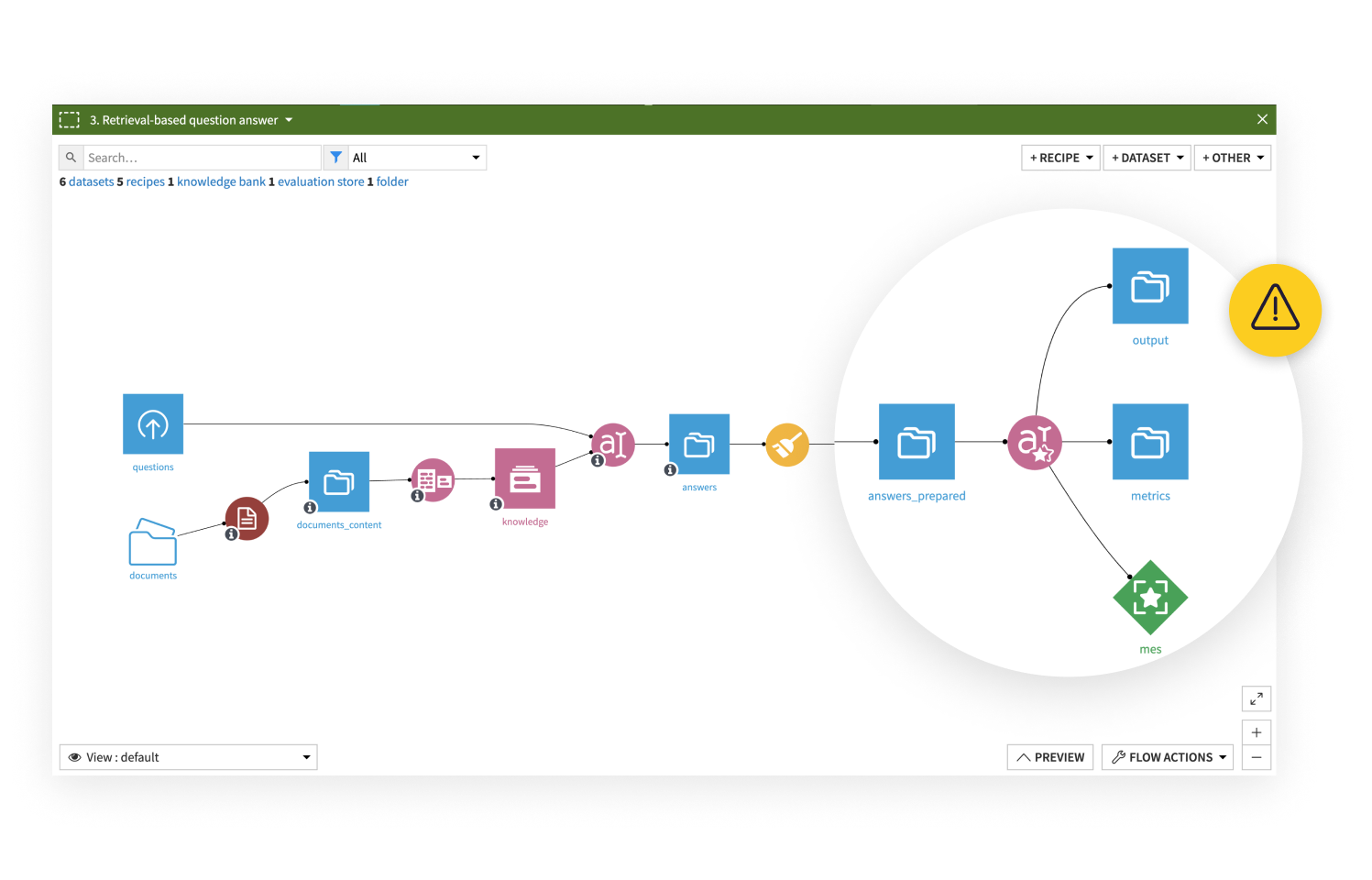

Continuously evaluate and monitor the performance of LLMs, agents, and RAG applications using automated metrics, statistical scoring, and LLM-as-a-judge evaluation. Compare model runs side by side, set alerts when performance drops, and bring in human reviewers to validate outputs when needed.

Fix problems quickly, without starting over

When a GenAI application underperforms, Dataiku keeps everything needed to investigate and improve in one place. Update prompts in Dataiku Prompt Studios, adjust pipelines visually, and use the Trace Explorer to audit every action an agent took. For RAG applications, teams can trace exactly how source documents were retrieved and used, making it straightforward to identify where things broke down.

See more GenAI features

The Dataiku LLM Mesh

Connect to any LLM provider or self-hosted model, with centralized visibility and control across every connection.

Dataiku Agent Hub

Build, deploy, and manage AI agents grounded in your enterprise data, with governance built in from the start.

Machine learning with Dataiku

Scale model development across your organization with a single, governed environment for building and deploying ML.

Loved by customers and recognized by analysts

“The platform is intuitive, collaborative, and streamlines workflows from data prep to model deployment. Dataiku has truly transformed how we handle data!”

Data scientist

Retail